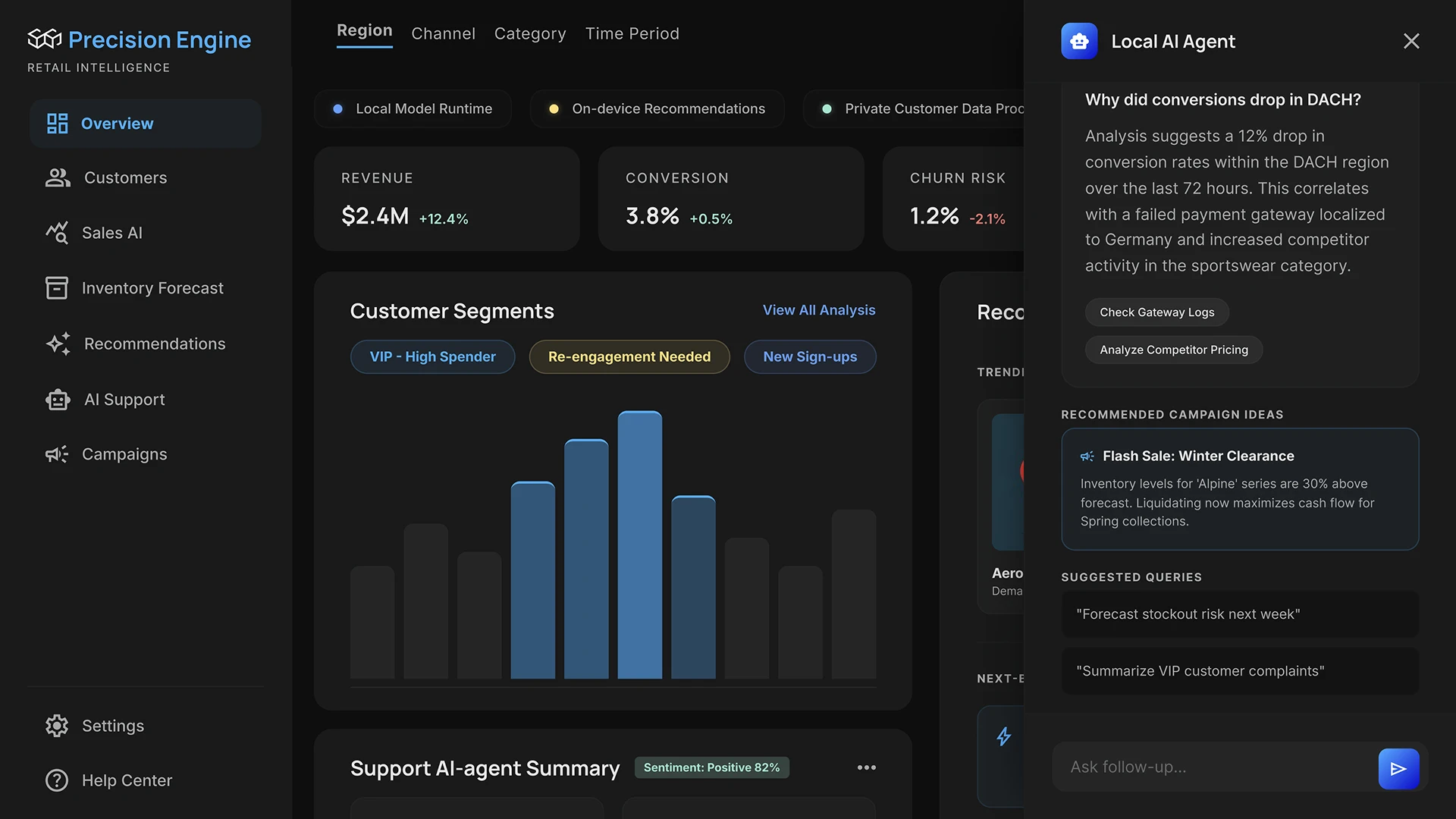

Companies are increasingly integrating AI into work processes: document processing, data analysis, internal assistants, and automated support. But when these tools handle real company data and other sensitive information, one question is often overlooked: where exactly does that data go?

When an employee asks GPT-4 a question about a client contract or sends fragments of internal reports to the cloud, the data leaves the company's perimeter. Depending on the provider's terms, cloud services may store queries or use them to improve their models, and they are subject to the jurisdiction of the country where the servers are hosted. For businesses handling personal data, financial information, or trade secrets, this isn't an abstract risk: it can be a direct violation of security policies or regulatory requirements.

At the same time, cloud AI can become expensive with regular use. Once several teams use it daily, token volumes and the bill grow quickly, often without clear visibility into what exactly is being billed.

This is why we increasingly see demand for local AI.

Why Companies Are Switching to Local AI

Moving to an on-premises solution is a business decision driven by several concrete reasons.

- Data confidentiality. Internal documents, negotiations, and client databases must all remain internal. An on-premises model doesn't send requests to external servers: it works with the data where it's stored.

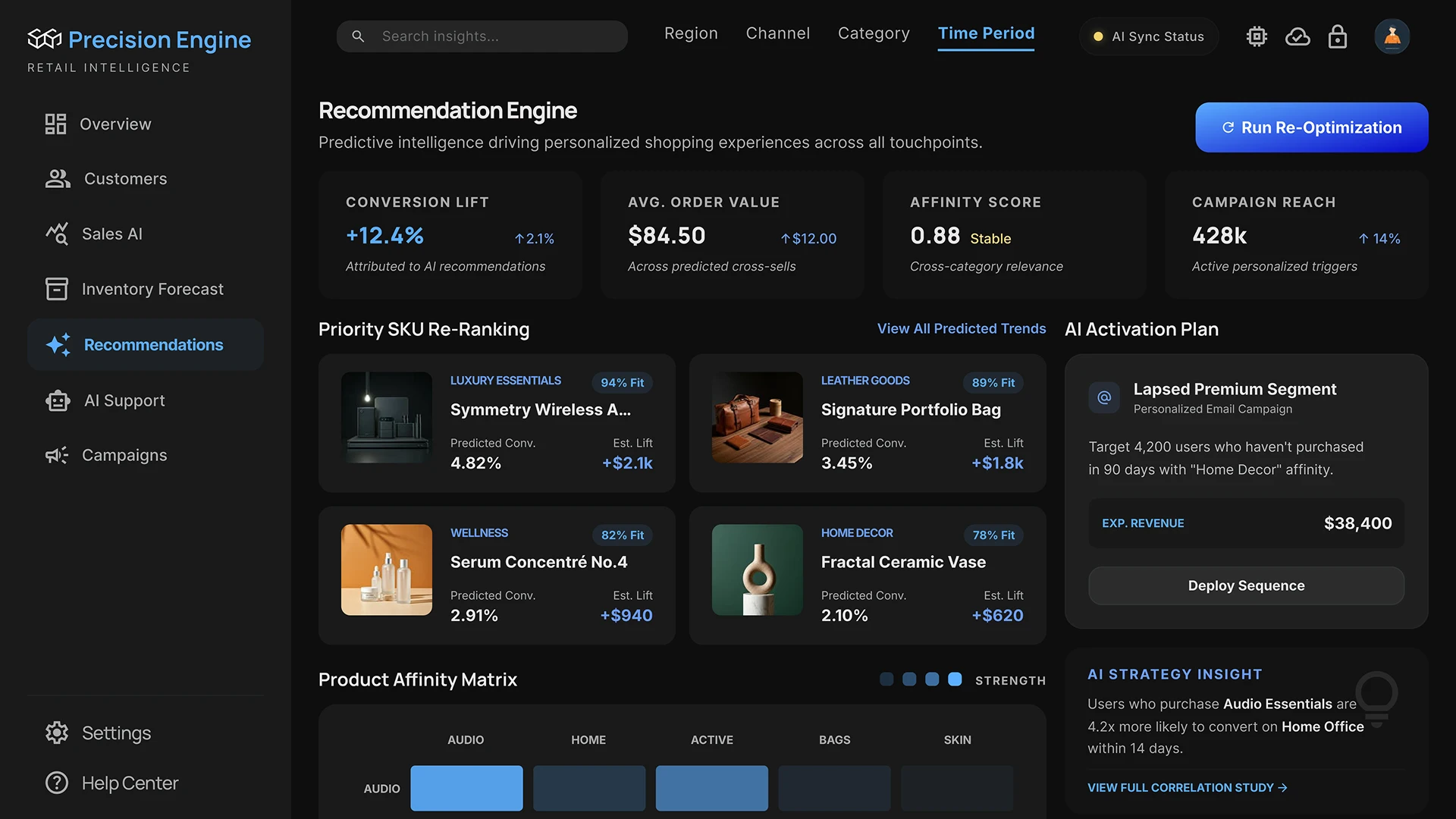

- Working with corporate context. A cloud model knows nothing about your company: its products, processes, or internal documentation. An on-premises system, by contrast, is built on top of your data and answers your specific questions.

- Reduced operating costs. A fixed investment in hardware instead of cloud bills that grow with usage.

- Control over the system. You determine which model is used, how it's configured, what data is connected to it, and who has access. No changes to terms of use, no sudden updates to model behavior.

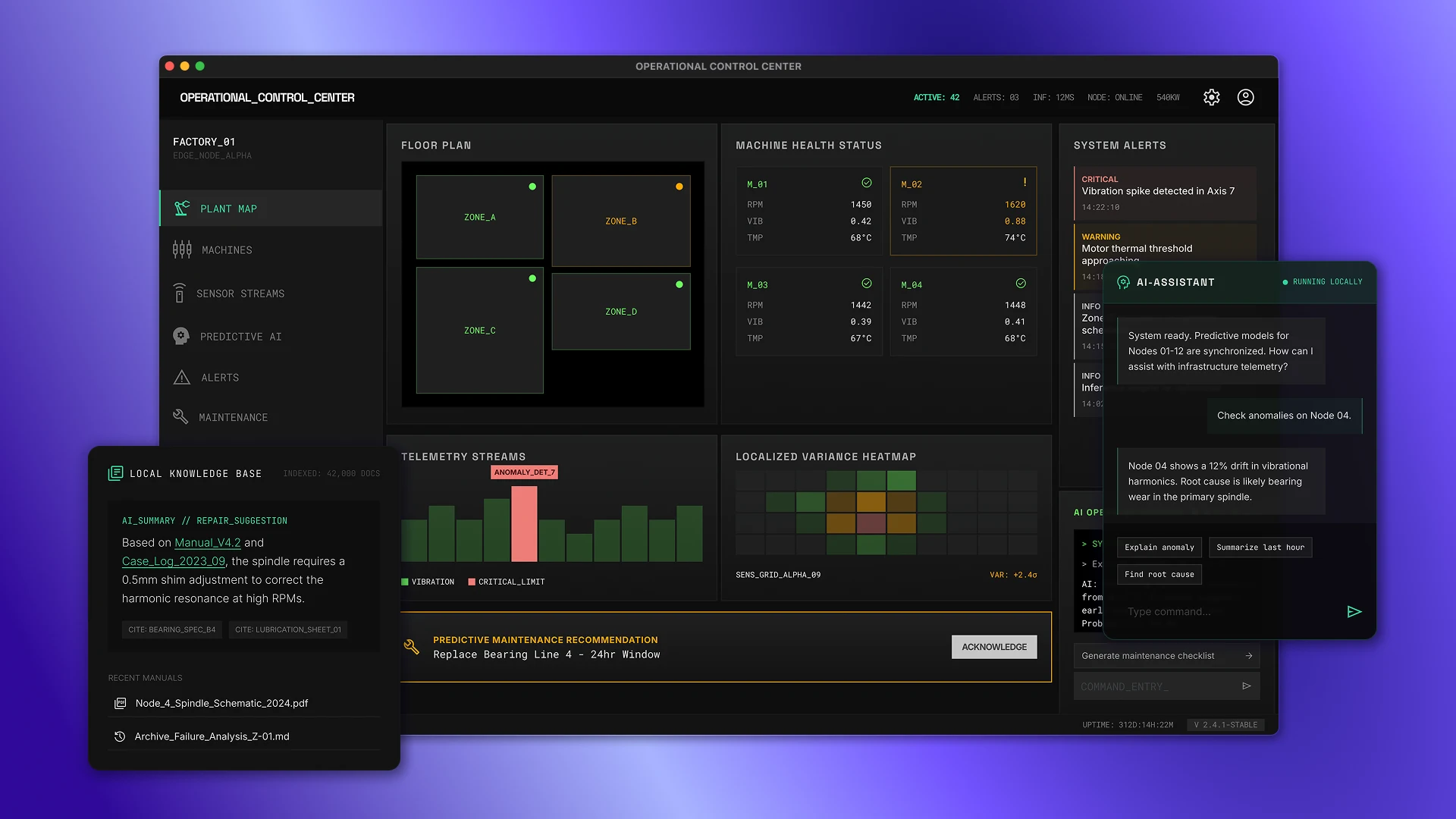

In practice, it works like this: a company connects its CRM, knowledge base, and internal policies to a local AI system. A manager asks a question and receives an answer based on real corporate data, without a single request to the external network.

What is local AI infrastructure?

It's important to understand from the outset: local AI is not a single program or a single model. It's a system of several interconnected components.

The language model (LLM) is the core of the system. It accepts a text query, understands the context, and generates a response. Models of varying scale can be run locally: from compact (7–13 billion parameters) to large (70 billion and above). The choice of model depends on the task and the available hardware.

A vector database is a knowledge repository. Documents, regulations, correspondence, CRM data – all of this is converted into numerical representations (vectors) and indexed. When a user asks a question, the system searches for relevant fragments and passes them to the model along with the query.

RAG (Retrieval-Augmented Generation) is the mechanism that brings in context. Instead of the model "inventing" a response from general knowledge, RAG retrieves specific data from the database, and the model generates its response based on that data. This is what makes enterprise AI truly useful.

AI agents are the active components of the system. Unlike a simple chatbot, an agent can perform actions: search for information, invoke tools, access external APIs, and carry out multi-step tasks. An agent is an AI that doesn't just respond; it acts.

Together, these four components make up a local AI system.

Why the Mac Mini is the Right Choice for a Local AI System

Typically, when people talk about running AI on-premises, they imagine expensive servers with powerful NVIDIA graphics cards. Such solutions do work, but they are expensive, need dedicated cooling, are noisy, and require ongoing maintenance.

The Mac Mini on Apple Silicon is a simpler and more affordable option.

The M-series chips (M1, M2, M3, M4) are designed differently: the CPU and GPU share the same unified memory. This eliminates the need to copy data back and forth between system RAM and the dedicated memory of a separate graphics card, as in traditional systems. For language models, it also means the GPU can use a large share of the system's memory, so a model too big for a typical graphics card can still be loaded.

The Mac Mini M4 Pro with 64 GB of memory is significantly cheaper than a professional GPU server, consumes 30–40 W under load, fits on a desk, and operates almost silently. At the same time, it can run models with 30–70 billion parameters (in quantized form) at a speed acceptable for many business workloads.
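A quick way to check whether a model fits is to multiply its parameter count by the bytes stored per weight. The sketch below is a rough estimate only: the 1.2 overhead factor (for the KV cache and runtime buffers) and the assumption that about 75% of unified memory is usable by the GPU are illustrative values, not measured figures, and real usage also depends on context length and the runtime.

```python
def estimate_model_memory_gb(params_billion: float, bits_per_weight: int,
                             overhead: float = 1.2) -> float:
    """Rough memory needed to run an LLM, in decimal gigabytes.

    params_billion: parameter count in billions (e.g. 70 for a 70B model)
    bits_per_weight: 16 for unquantized FP16 weights, 8 or 4 for common quantized formats
    overhead: assumed multiplier for the KV cache and runtime buffers (illustrative value)
    """
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * overhead / 1e9


def fits_in_memory(params_billion: float, bits_per_weight: int, memory_gb: float,
                   usable_fraction: float = 0.75) -> bool:
    """Compare the estimate with the share of unified memory assumed usable by the GPU."""
    return estimate_model_memory_gb(params_billion, bits_per_weight) <= memory_gb * usable_fraction


for params in (8, 32, 70):
    for bits in (16, 8, 4):
        need = estimate_model_memory_gb(params, bits)
        print(f"{params}B at {bits}-bit: ~{need:.0f} GB, fits in 64 GB: {fits_in_memory(params, bits, 64)}")
```

Under these assumptions a 70B model needs roughly 42 GB at 4-bit and does not fit at 8-bit, which is why running the larger models on a 64 GB machine relies on quantization.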

For a startup or mid-sized company, this means a fully fledged on-premises AI server without a server room and without enterprise-level capital expenditure.

Solution architecture

This is the key part: it is what distinguishes a real working system from a set of randomly installed tools.

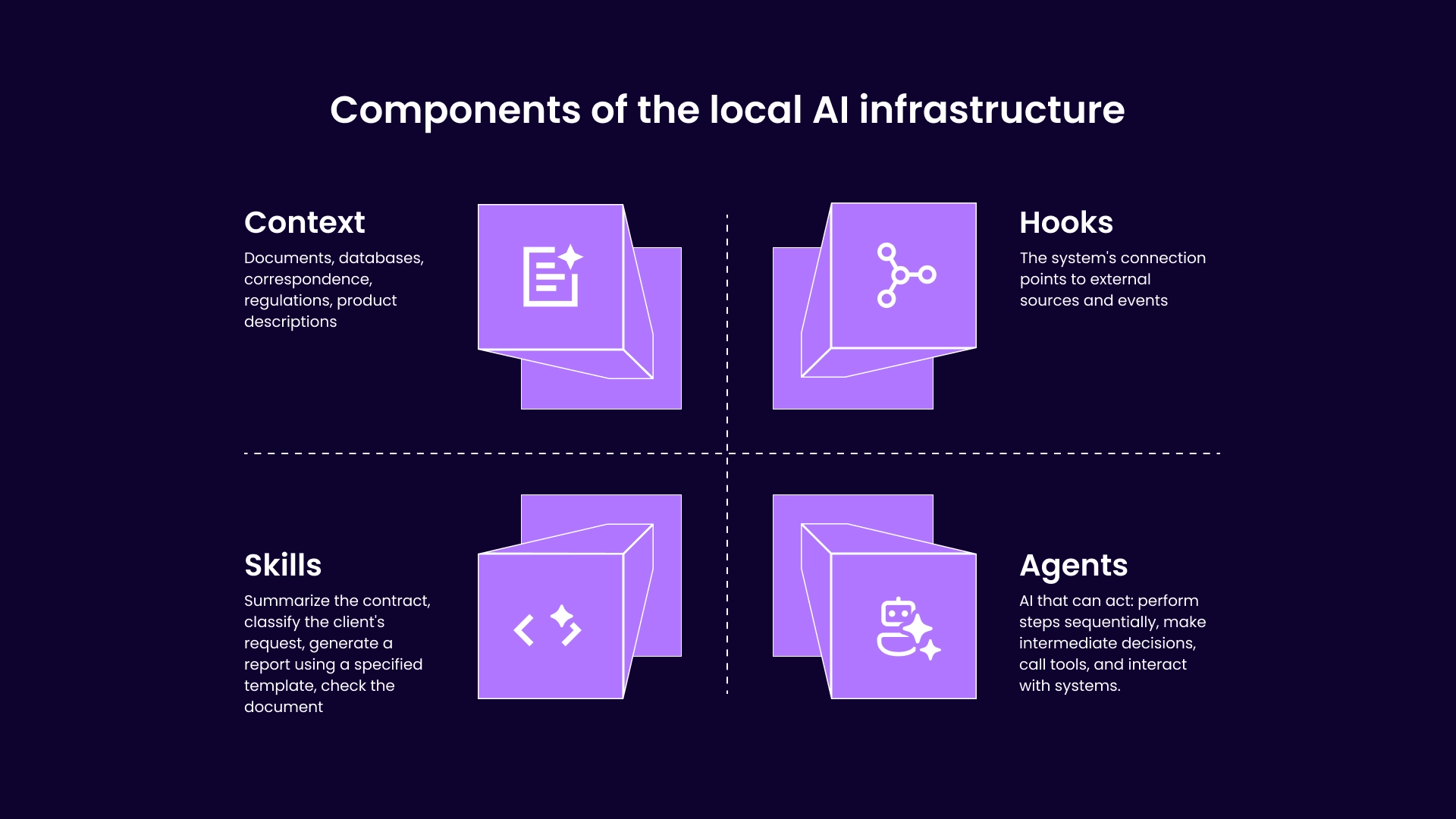

The local AI infrastructure is built on four levels.

Context

This is what the model knows about your company. Documents, databases, correspondence, regulations, product descriptions – everything necessary for the AI to respond concretely, not abstractly.

Context is generated through the RAG pipeline: documents are uploaded, split into fragments, converted into vectors, and indexed. For each query, the system automatically extracts relevant fragments and feeds them to the model.

Without a properly built context layer, the model responds based on general knowledge. With one, it responds based on your data.
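To make the context pipeline concrete, here is a deliberately minimal sketch. It replaces a real embedding model with a simple bag-of-words vector (an assumption made only to keep the example self-contained and runnable offline) and shows the three moves described above: vectorize the fragments, find the ones most similar to the query, and feed them to the model together with the question. The sample fragments are illustrative, not real company data.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, fragments: list[str], k: int = 2) -> list[str]:
    """Return the k fragments most similar to the query."""
    q = vectorize(query)
    ranked = sorted(fragments, key=lambda f: cosine(q, vectorize(f)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Feed the retrieved fragments to the model together with the question."""
    joined = "\n---\n".join(context)
    return f"Context:\n{joined}\n\nQuestion: {query}\nAnswer using only the context above."


fragments = [
    "Vacation requests must be approved by the team lead at least two weeks in advance.",
    "Expense reports are submitted through the finance portal by the 5th of each month.",
    "New laptops are ordered by the IT department after manager approval.",
]
question = "Who approves vacation requests?"
top = retrieve(question, fragments, k=1)
print(build_prompt(question, top))
```

In a production system, `vectorize` would call a real embedding model and the fragments would live in a vector database, but the retrieve-then-prompt structure stays the same.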

Skills

Skills are the specialized capabilities the system is equipped with, such as summarizing a contract, classifying a client's request, generating a report from a specified template, or checking a document for compliance with regulations.

Each skill is a separately configured module with its own prompt, logic, and data sources. Skills are not universal: they are tailored to specific company tasks.
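One way to keep skills separate is to describe each one as a small configuration object: a name, a prompt template, and the data sources it may use. The `Skill` class, the skill names, and the source collection names below are hypothetical; they illustrate the structure rather than any particular framework's API.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """A separately configured capability with its own prompt, logic, and data sources."""
    name: str
    prompt_template: str  # uses {input} as the placeholder for the user's request
    sources: list[str] = field(default_factory=list)  # knowledge-base collections it may search

    def render(self, user_input: str) -> str:
        """Fill the skill's prompt template with the user's request."""
        return self.prompt_template.format(input=user_input)


# Hypothetical skills; a real system would load these from configuration
SKILLS = {
    s.name: s
    for s in [
        Skill("summarize_contract",
              "Summarize the key terms of this contract (parties, payment, liability):\n{input}",
              sources=["contracts"]),
        Skill("classify_request",
              "Classify this client request as one of: billing, technical, sales.\n{input}",
              sources=["support_tickets"]),
    ]
}


def run_skill(name: str, user_input: str) -> str:
    """Look up a skill by name and build the prompt that would be sent to the model."""
    if name not in SKILLS:
        raise KeyError(f"Unknown skill: {name}")
    return SKILLS[name].render(user_input)


print(run_skill("classify_request", "My invoice shows the wrong amount."))
```

Keeping prompts and sources in data rather than code makes it easy to tune one skill without touching the others.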

Hooks

These are the system's connection points to external sources and events. The CRM updates a client's status, and the AI receives a notification. A new document arrives, and automatic processing is triggered. An employee sends a request via Slack, and the system responds.

Hooks transform AI from a tool that must be accessed manually into a component of a business process that operates independently.
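In code, a hook is essentially an event handler: an external system emits an event, and the AI layer reacts to it. The minimal dispatcher below is an illustration only; the event names (`crm.status_changed`, `document.received`) and the handler bodies are hypothetical placeholders for real integrations such as CRM webhooks or a Slack bot.

```python
from collections import defaultdict
from typing import Callable

# Registry mapping an event name to the handlers subscribed to it
_handlers: dict[str, list[Callable[[dict], str]]] = defaultdict(list)


def on(event: str):
    """Decorator that registers a function as a hook for the given event."""
    def register(func: Callable[[dict], str]) -> Callable[[dict], str]:
        _handlers[event].append(func)
        return func
    return register


def emit(event: str, payload: dict) -> list[str]:
    """Deliver an event to every registered hook and collect their results."""
    return [handler(payload) for handler in _handlers.get(event, [])]


@on("crm.status_changed")
def notify_ai_of_status(payload: dict) -> str:
    # A real handler might ask the model to summarize the client's history
    return f"AI notified: client {payload['client_id']} is now {payload['status']}"


@on("document.received")
def process_document(payload: dict) -> str:
    # A real handler might trigger chunking and indexing of the new file
    return f"Indexing {payload['filename']}"


print(emit("crm.status_changed", {"client_id": 42, "status": "negotiation"}))
print(emit("unknown.event", {}))  # no hooks registered, so nothing happens
```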

Agents

The top level of the architecture. An agent is an AI that can not only respond but also act: sequentially perform steps, make intermediate decisions, invoke tools, and interact with systems.

For example, an agent receives a request to generate a report: it searches the knowledge base for the necessary data, accesses the CRM via API, creates the structure, generates the text, and sends the result to the appropriate channel. All of this happens without human intervention.
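The report example can be expressed as a loop in which the agent executes steps in order, calling a tool at each step and passing the results forward. In a real agent the model itself decides which tool to call next; here the plan is fixed and every tool is a hypothetical stub with placeholder data, so the sketch shows only the control flow.

```python
from typing import Callable


# Hypothetical tools; real ones would query the knowledge base, call a CRM API, etc.
def search_knowledge_base(state: dict) -> dict:
    state["facts"] = [f"Policy excerpt relevant to '{state['request']}'"]
    return state


def fetch_crm_data(state: dict) -> dict:
    state["crm"] = {"open_deals": 3, "closed_deals": 5}  # placeholder data
    return state


def draft_report(state: dict) -> dict:
    crm = state["crm"]
    state["report"] = (
        f"Report: {state['request']}\n"
        f"Open deals: {crm['open_deals']}, closed deals: {crm['closed_deals']}\n"
        f"Sources: {len(state['facts'])} knowledge-base fragment(s)"
    )
    return state


def send_to_channel(state: dict) -> dict:
    state["sent_to"] = "#reports"  # a real tool would post to Slack or send an e-mail
    return state


TOOLS: dict[str, Callable[[dict], dict]] = {
    "search": search_knowledge_base,
    "crm": fetch_crm_data,
    "draft": draft_report,
    "send": send_to_channel,
}


def run_agent(request: str, plan: list[str]) -> dict:
    """Execute each planned step in order, passing the accumulated state between tools."""
    state = {"request": request, "log": []}
    for step in plan:
        state = TOOLS[step](state)
        state["log"].append(step)
    return state


result = run_agent("Q3 sales summary", ["search", "crm", "draft", "send"])
print(result["report"])
print("Steps:", result["log"], "->", result["sent_to"])
```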

The four levels work together: remove one, and the system loses precision, flexibility, or integration.

How this is implemented in practice

Below is a sequence of steps you can take from scratch to deploy a local AI system.

1. Preparing the environment

First, make sure the Mac meets the basic requirements:

- Ideally 16 GB of unified memory or more (32 GB is better if you plan to work with larger models)

- Enough free disk space (at least 20–50 GB for models and data)

Next:

1.1. Update macOS to the latest version.

1.2. Install Ollama:

- download it from the official website (ollama.com)

- install it like any other application

1.3. Check that everything works:

- open Terminal

- run the command: ollama run llama3

1.4. If the model downloads and responds, the base environment is ready.

At this stage, you already have a local AI, but without a connection to your data.
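Once `ollama run` works, the same model is also reachable from other programs through Ollama's local HTTP API, which listens on port 11434 by default. The sketch below uses only the Python standard library and the `/api/generate` endpoint with streaming turned off; it assumes the Ollama app is running and the `llama3` model has already been pulled.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_generate_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Prepare a non-streaming request to Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3", timeout: float = 120.0) -> str:
    """Send a prompt to the local model and return the generated text.

    The URL points at localhost, so the request never leaves the machine.
    """
    with urllib.request.urlopen(build_generate_request(prompt, model), timeout=timeout) as resp:
        # With "stream": False, Ollama returns a single JSON object whose
        # "response" field contains the full answer.
        return json.loads(resp.read())["response"]
```

For example, `ask_local_model("Summarize our vacation policy in one sentence.")` returns the model's answer as a string; if Ollama is not running, `urlopen` raises a `URLError`.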

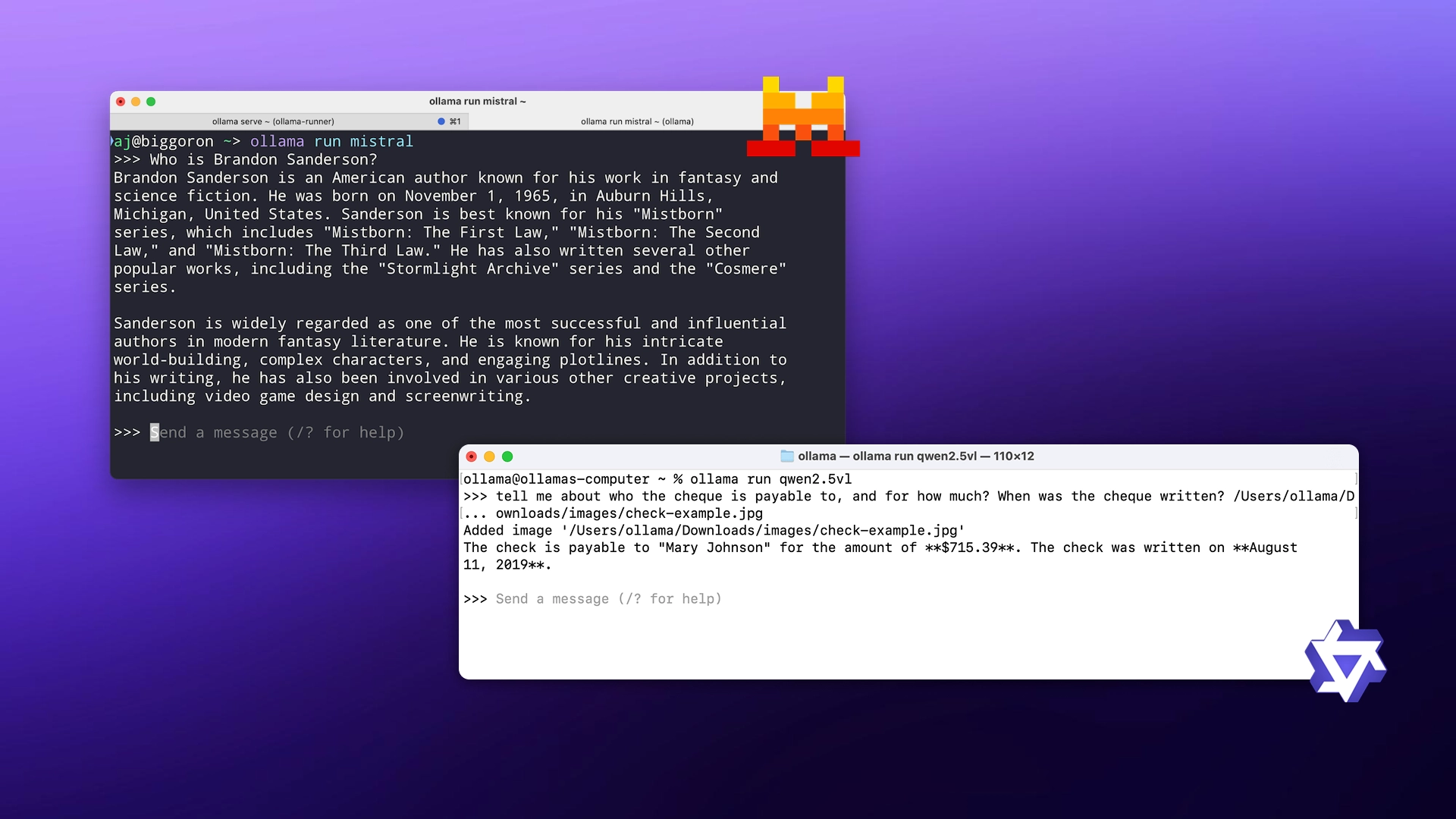

2. Selecting and running models

Now we need to figure out which models to use.

Start simple.

What to do:

- Download one or two models for testing:

ollama run mistral

ollama run qwen

- Test them on your own tasks:

- ask a question

- ask it to write a text

- give it a fragment of a document

Pay attention to:

- response speed

- text quality

- how well the model "understands" the request

After that, select one main model and, if necessary, one additional one.

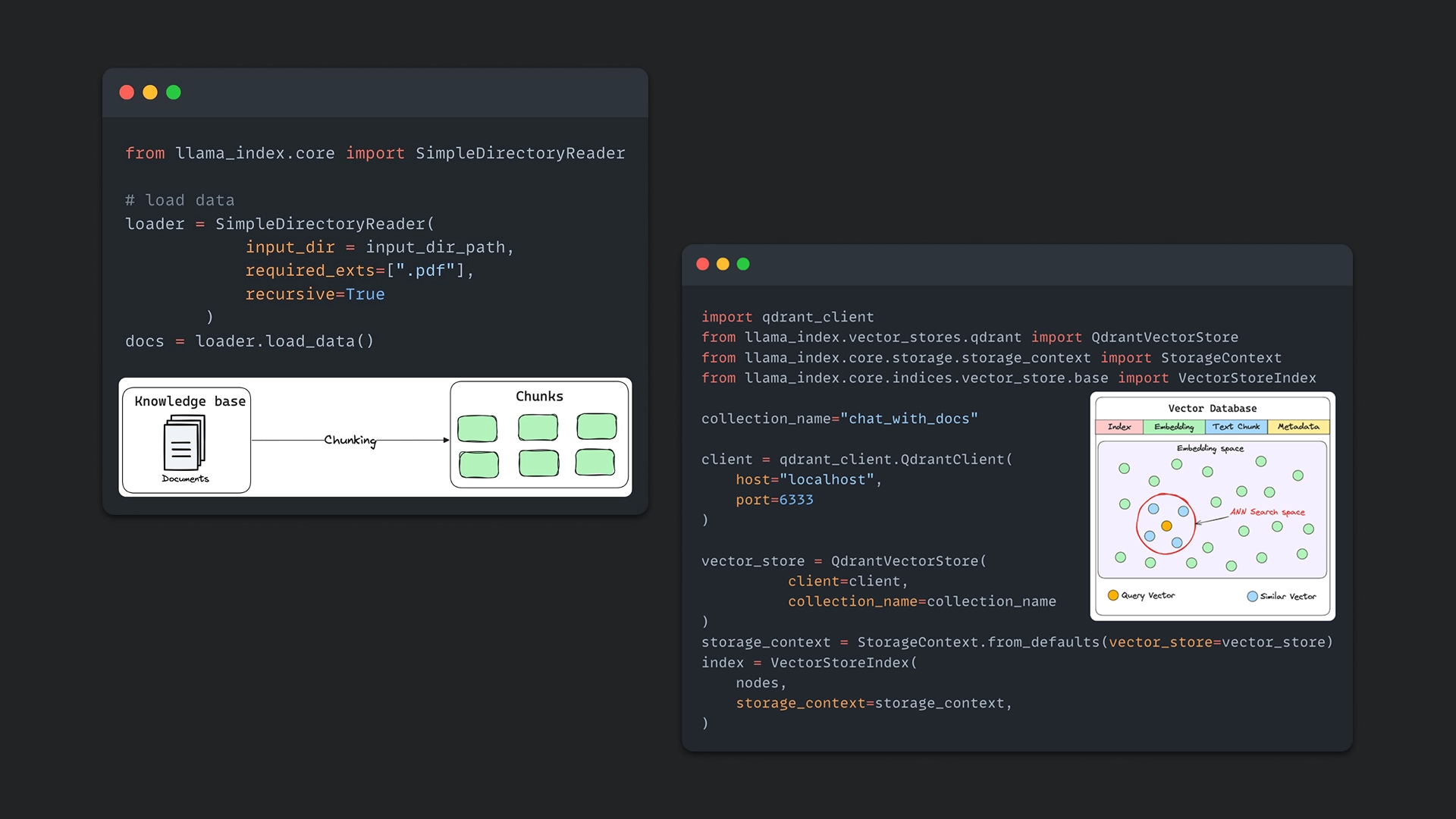

3. Connecting the knowledge base

Now let's add your data.

Step 1: Prepare documents

Gather everything the model needs to work with:

- PDF files

- notes

- instructions

- correspondence (if necessary)

Step 2: Chunking

Documents are divided into small fragments (usually 300–800 words). This lets the system retrieve precisely the sections relevant to a question instead of whole documents.
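A minimal word-based chunker might look like this. The 300–800 word range comes from the text above; the overlap between neighboring chunks is an additional, commonly used assumption that keeps a sentence cut at a boundary visible in both fragments. Libraries such as LangChain and LlamaIndex provide more sophisticated splitters.

```python
def chunk_words(text: str, chunk_size: int = 400, overlap: int = 50) -> list[str]:
    """Split text into chunks of at most chunk_size words.

    Consecutive chunks share `overlap` words so that context cut at a boundary
    is not lost. Sizes are counted in words, matching the 300-800 word guideline.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(1000))
parts = chunk_words(sample, chunk_size=400, overlap=50)
print(len(parts), [len(p.split()) for p in parts])  # 3 [400, 400, 300]
```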

Step 3: Create a vector database

Install a vector database (for example, Chroma).

Next:

- Convert text to embeddings (vectors)

- Save them to the database

- Link each fragment to the source document

In practice, this is done in Python, either directly or with ready-made libraries (for example, LangChain or LlamaIndex).
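The three steps above (converting fragments to embeddings, saving them, and linking each one to its source) can be sketched without any framework. This assumes a recent Ollama version that exposes the `/api/embed` endpoint and an embedding model you have pulled; `nomic-embed-text` is an assumed choice. The plain list returned by `index_fragments` stands in for a real vector database such as Chroma, and `fake_embed` is a hypothetical offline stand-in so the example runs without Ollama.

```python
import json
import urllib.request
from typing import Callable

EMBED_URL = "http://localhost:11434/api/embed"  # assumes a recent Ollama version


def ollama_embed(texts: list[str], model: str = "nomic-embed-text") -> list[list[float]]:
    """Request embeddings for a batch of texts from the local Ollama server."""
    body = json.dumps({"model": model, "input": texts}).encode("utf-8")
    request = urllib.request.Request(EMBED_URL, data=body,
                                     headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["embeddings"]


def index_fragments(fragments: list[tuple[str, str]],
                    embed: Callable[[list[str]], list[list[float]]] = ollama_embed) -> list[dict]:
    """Embed (source, text) pairs and keep each vector linked to its source document."""
    vectors = embed([text for _, text in fragments])
    return [
        # The id is a running number prefixed with the source file name
        {"id": f"{source}#{i}", "source": source, "text": text, "vector": vector}
        for i, ((source, text), vector) in enumerate(zip(fragments, vectors))
    ]


def fake_embed(texts: list[str]) -> list[list[float]]:
    """Offline stand-in for an embedding model, used only to demonstrate the structure."""
    return [[float(len(t))] for t in texts]


index = index_fragments(
    [("handbook.pdf", "Vacation rules..."), ("policy.docx", "Expense rules...")],
    embed=fake_embed,
)
print(index[0]["id"], index[1]["source"])
```

Keeping the `source` field next to every vector is what later allows the assistant to answer "with a link to the source".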

4. Setting up RAG

Now you need to "link" the model and the database. This step assumes Ollama is already installed, or that your interface is configured to access another LLM via an API.

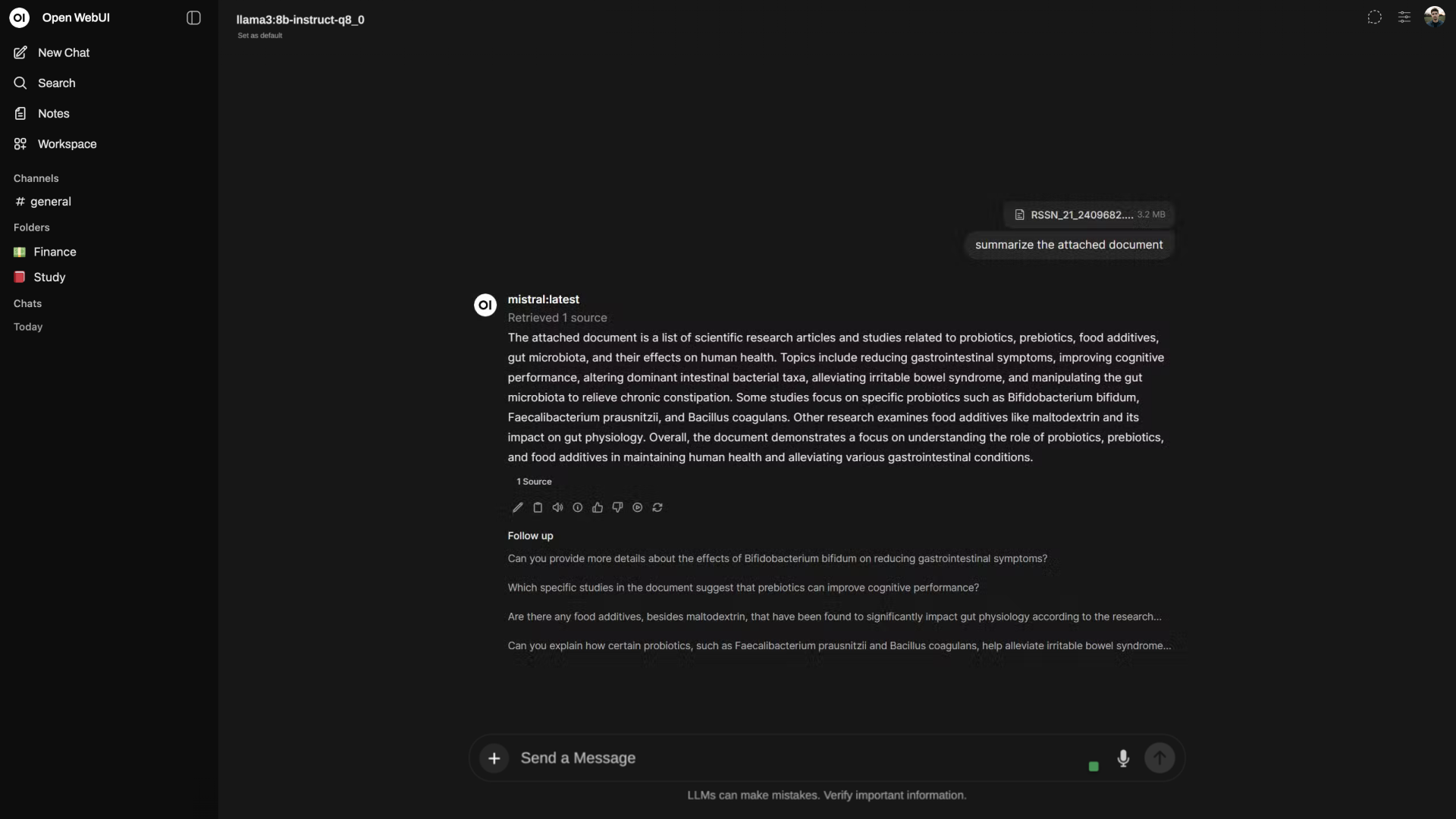

Step 1: Set up a working interface

To avoid working through the terminal, you need a graphical interface:

- Open WebUI: the more flexible option

- LM Studio: easier to get started

What to do:

- Install the selected tool

- Run it

- Make sure it “sees” your model (via Ollama or API)

Step 2: Select and activate the model

In the interface:

- Open the list of models

- Select one that is already installed (for example):

- Mistral

- Llama

- Qwen

- Set it as the default model for chat.

Check: the model should answer general questions.

Step 3: Add Documents (Knowledge Base)

In the interface, find the section:

- “Knowledge” / “Documents” / “Files”

Next:

- Upload files (PDF, DOCX, TXT)

- Wait for processing

The system automatically:

- splits the text into fragments

- creates a vector index (usually based on Chroma or similar)

Step 4: Enable RAG usage

Now it is important to link the model and documents:

- Open Chat/Assistant settings.

- Find the parameters:

- Use knowledge base

- Retrieval

- “Context”

- Turn them on.

- Specify which documents to use.

Without this step, RAG will not work even if the files are uploaded.

Step 5: Set a response rule

In the assistant settings (System Prompt), add:

Answer only based on the uploaded documents. If they do not contain enough information, say so.

This reduces the likelihood of fabricated answers.
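If you call the model from code instead of through the interface, the same kind of rule goes into the `system` message. The sketch below builds a message list in the role/content format accepted by Ollama's `/api/chat` endpoint (and by most chat APIs). The rule text is adapted from the system prompt above; numbering the fragments as `[1]`, `[2]` is an assumption that makes it easier for the model to cite them. The sample fragment is illustrative.

```python
SYSTEM_RULE = (
    "Answer only based on the documents provided below. "
    "If they do not contain enough information, say so."
)


def build_messages(question: str, fragments: list[str]) -> list[dict]:
    """Combine the grounding rule, the retrieved fragments, and the user's question."""
    context = "\n\n".join(f"[{i}] {text}" for i, text in enumerate(fragments, start=1))
    return [
        {"role": "system", "content": f"{SYSTEM_RULE}\n\nDocuments:\n{context}"},
        {"role": "user", "content": question},
    ]


messages = build_messages(
    "How many vacation days do new employees get?",
    ["New employees receive 20 vacation days per year."],  # illustrative fragment
)
for m in messages:
    print(m["role"], "->", m["content"])
```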

Step 6: Adjust the search settings

If the interface allows, check:

- Top K (number of fragments) → 3–5

- Search type → similarity or hybrid

If there are no such settings, use the default values.
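What these two settings control can be shown in a few lines. In the sketch below, `similarity` ranks fragments by the cosine similarity of their vectors, while `hybrid` blends that score with a simple keyword-overlap score. The 0.7/0.3 weighting and the tiny hand-made vectors are assumptions for illustration; real tools implement hybrid search differently (often with BM25 for the keyword part).

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query words that literally appear in the fragment."""
    q_words = set(query.lower().split())
    return len(q_words & set(text.lower().split())) / len(q_words) if q_words else 0.0


def search(query: str, query_vec: list[float], fragments: list[dict],
           top_k: int = 3, mode: str = "similarity", alpha: float = 0.7) -> list[str]:
    """Return the texts of the top_k fragments.

    mode="similarity": vector similarity only.
    mode="hybrid": alpha * vector similarity + (1 - alpha) * keyword overlap.
    """
    def score(f: dict) -> float:
        sim = cosine(query_vec, f["vector"])
        if mode == "hybrid":
            return alpha * sim + (1 - alpha) * keyword_score(query, f["text"])
        return sim

    return [f["text"] for f in sorted(fragments, key=score, reverse=True)[:top_k]]


fragments = [
    {"text": "invoice payment terms net 30", "vector": [0.9, 0.1]},
    {"text": "refund policy for damaged goods", "vector": [1.0, 0.0]},
    {"text": "office opening hours", "vector": [0.0, 1.0]},
]
query, query_vec = "invoice payment terms", [1.0, 0.0]
print(search(query, query_vec, fragments, top_k=1))                 # vector match only
print(search(query, query_vec, fragments, top_k=1, mode="hybrid"))  # exact terms also count
```

In this toy data the pure vector search prefers the refund fragment, while hybrid search lifts the fragment that literally contains the query terms, which is the situation where hybrid mode tends to help.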

Step 7: Test the setup

Run two tests:

- A question that is answered in the documents

- A question that has no answer

Expected result:

- in the first case – the exact answer according to the data

- in the second – an honest "no information"
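These two checks can also be automated so they are repeated after every change to the model, the documents, or the prompt. In this sketch `answer` stands for a function you would connect to your own RAG setup; `stub_answer` is a hypothetical stand-in that only lets the harness run on its own, and matching on expected phrases is a deliberately crude assumption.

```python
from typing import Callable

NO_INFO_MARKERS = ("no information", "not enough information", "does not contain")


def evaluate(answer: Callable[[str], str], cases: list[dict]) -> list[tuple[str, bool]]:
    """Run each test question and check the reply against the expected behavior.

    A case with an "expect" string must mention that text; a case with
    expect=None must admit that the documents contain no answer.
    """
    results = []
    for case in cases:
        reply = answer(case["question"]).lower()
        if case["expect"] is None:
            passed = any(marker in reply for marker in NO_INFO_MARKERS)
        else:
            passed = case["expect"].lower() in reply
        results.append((case["question"], passed))
    return results


def stub_answer(question: str) -> str:
    """Hypothetical stand-in for the real RAG assistant."""
    if "vacation" in question.lower():
        return "New employees receive 20 vacation days per year."
    return "The documents contain no information on this."


cases = [
    {"question": "How many vacation days do new employees get?", "expect": "20 vacation days"},
    {"question": "What is the CEO's favorite color?", "expect": None},
]
for question, passed in evaluate(stub_answer, cases):
    print("PASS" if passed else "FAIL", question)
```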

5. Launching agents

Step 1: Open the interface and proceed to creating an assistant

Launch Open WebUI. Find the "Assistants," "Agents," or "Workspaces" section in the menu and click the "Create Assistant" button.

Step 2: Define the agent's task

In the “Instructions” or “System Prompt” field, describe what it should do.

Example: answering customer questions, analyzing documents, or assisting with reports.

Step 3: Connect the Knowledge Base (RAG)

Find the “Knowledge” or “Documents” block and select the previously uploaded files.

If the documents have not yet been added, upload them here.

This will allow the agent to respond based on your information, not just “general knowledge.”

Step 4: Add tools if needed

Some interface versions have a “Tools” or “Functions” section.

There you can connect:

- APIs

- external services

- additional data sources

If this block is missing, you can work only with the knowledge base; this is sufficient for most tasks.

Step 5: Define the working logic

The logic is written as plain text in the same “Instructions” field.

Example:

- If the question is about the documents, use the knowledge base.

- If there is no relevant information, say so.

- Do not make up answers.

Step 6: Customize the response format

Also, use the instructions to specify what the result should look like.

For example:

- short answer

- structured answer (points)

- JSON (if needed for integrations)
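When the assistant is asked to reply in JSON for an integration, the receiving side should not trust the format blindly: models occasionally wrap JSON in extra text or omit fields. A minimal check might look like this; the required field names and types are hypothetical and should match whatever your instructions specify.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s)
REQUIRED_FIELDS = {"summary": str, "category": str, "confidence": (int, float)}


def parse_model_json(raw: str) -> dict:
    """Extract and validate a JSON object from a model reply.

    Tolerates text around the object by taking the span between the first '{'
    and the last '}', then checks that required fields exist with the right types.
    Raises ValueError (json.JSONDecodeError is a subclass) on any problem.
    """
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in reply")
    data = json.loads(raw[start:end + 1])
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"field '{name}' is missing or has the wrong type")
    return data


reply = 'Here is the result: {"summary": "Late delivery complaint", "category": "logistics", "confidence": 0.9}'
print(parse_model_json(reply)["category"])
try:
    parse_model_json('{"summary": "Missing fields"}')
except ValueError as err:
    print("Rejected:", err)
```

Rejected replies can be logged and retried, which is safer than passing malformed data into a CRM or another downstream system.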

Step 7: Save the assistant and test it

Save your settings, open a chat with this assistant and ask some real questions.

Check:

- Does it use the documents?

- Does it avoid making up answers?

- Does it follow the format?

Step 8: Adjust as needed

If the answers are weak:

- clarify the instructions

- reformulate the logic

- check that the knowledge base is actually connected

- if necessary, change the model in Ollama

What is the result?

After completing all the steps, you have:

- a locally running model

- a knowledge base with your data

- search over that data (RAG)

- agents for specific tasks

All of this works without the cloud and without sending data outside the company.

If you don't want to configure the system yourself, you can hire an experienced engineer to set it up, scale it, integrate it, and optimize it.

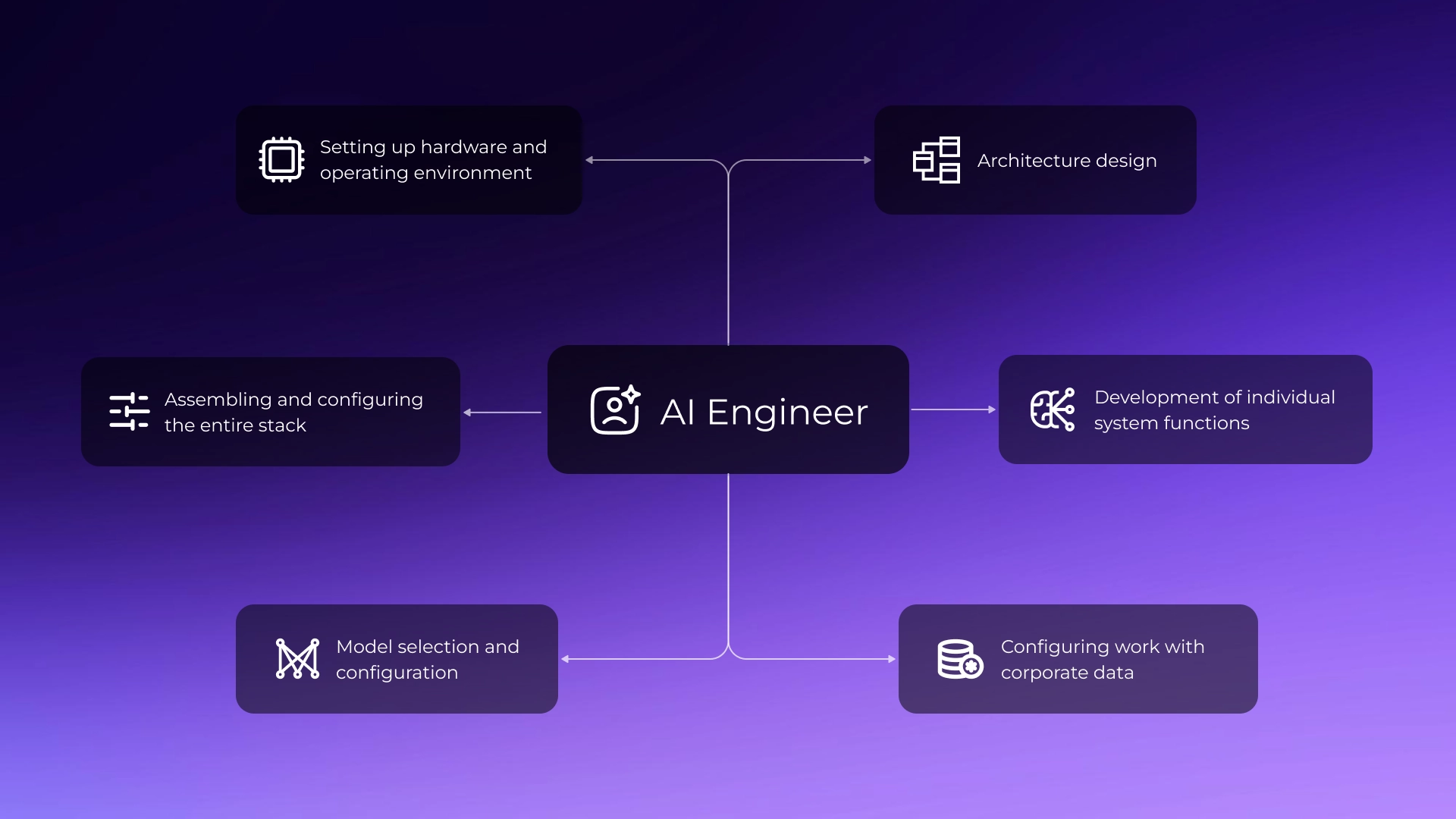

What does a local AI engineer do?

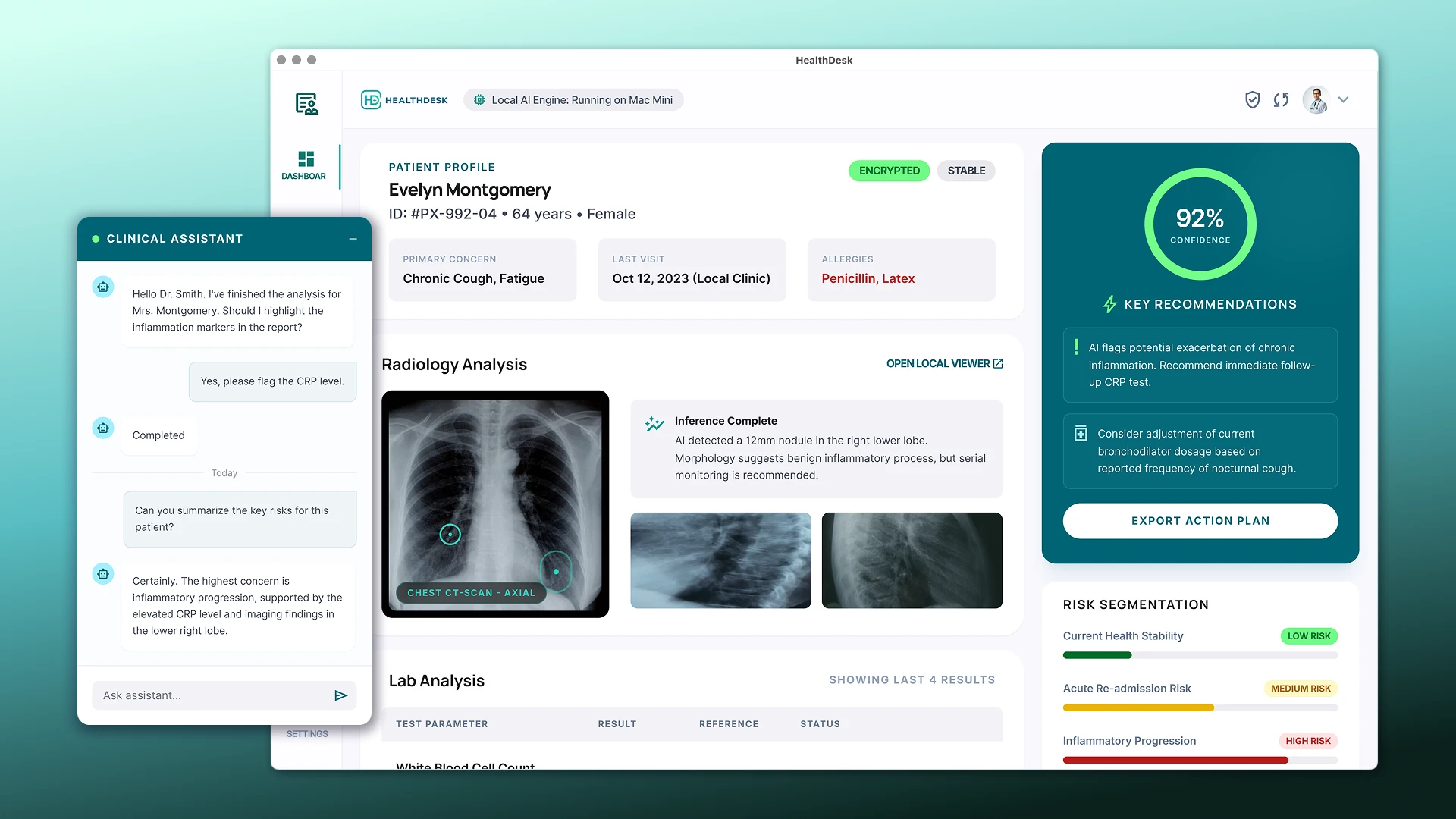

A local AI engineer is neither a general developer nor a data scientist. They work at the intersection of three fields: they understand how the hardware works, how language models are structured, and how to assemble a working business product from a set of tools. Such specialists are rare precisely because each of these fields is deep in its own right.

- Setting up the hardware and operating environment. A Mac Mini serving as an AI server requires careful preparation. An engineer configures the system to ensure stable operation under constant load: correctly distributing memory across multiple models, ensuring access to the system within the company network, setting up temperature and load monitoring, and organizing backups. Automatic startup of all services after a reboot and system logging are also set up, so that the cause of any unexpected situation can be identified quickly.

- Assembling and configuring the entire stack. As you already know, local AI is not a single program, but several interconnected components. An engineer deploys each one and connects them together: an environment for running models, an interface for accessing the system from other applications, a database for storing corporate knowledge, and, if necessary, tools for processing tasks in the background. Each component is configured for a specific workload, rather than running with default settings.

- Model selection and tuning. Choosing a model requires hands-on verification; ratings and reviews alone are not enough. An engineer checks how a specific model performs on the company's actual tasks: testing response quality on real-world examples, measuring performance on the target hardware, and assessing memory consumption. If necessary, the model is "compressed" (quantized): this allows a more powerful version to run within the available resources with a controlled loss of quality.

- Architecture design. This is the most critical part of the job. The engineer considers how data flows throughout the system: where it comes from, how it's processed, who has access to what. Growth logic is laid out in advance: what will change in the system if the number of users triples or the volume of documents increases tenfold. Failure behavior is also considered – how the system recovers if one component stops working.

- Development of individual system functions. Each function – contract analysis, request classification, report generation – is a separately designed module. The engineer determines what data is needed for each function, how it should process the request, and the format in which the result should be returned.

- Setting up work with corporate data. The quality of the system's responses directly depends on how the knowledge base search is configured. The engineer selects the optimal method for breaking documents into fragments, configures the search logic for relevant parts, and determines how the found information is included in the model query. This is where problems most often arise for those attempting to build the system themselves: everything works technically, but the responses are inaccurate or off-topic.

- Post-launch refinement. The system doesn't reach operational quality immediately. After launch, a testing cycle begins on real-world scenarios: errors are recorded, settings are adjusted, and the logic is refined. An engineer builds a process for evaluating response quality: some checks are automated, while others require the participation of people with deep knowledge of the subject area. This is an iterative process, and it is part of the implementation, not an afterthought.

Examples of use

AI assistant for internal support

Industry: IT company, SaaS

Size: 300–350 employees

Problem: HR and IT spent up to 30% of their time on repetitive questions from employees – about regulations, approval processes, and internal instructions. The information existed in documents, but it was difficult to find independently.

Solution: A local AI assistant was deployed on a Mac Mini and connected to the company's internal document database. Employees communicate with it in natural language via Slack and receive accurate responses with a link to the source. The data remains within the company's perimeter. The workload on HR and IT decreased, and new employees got up to speed faster.

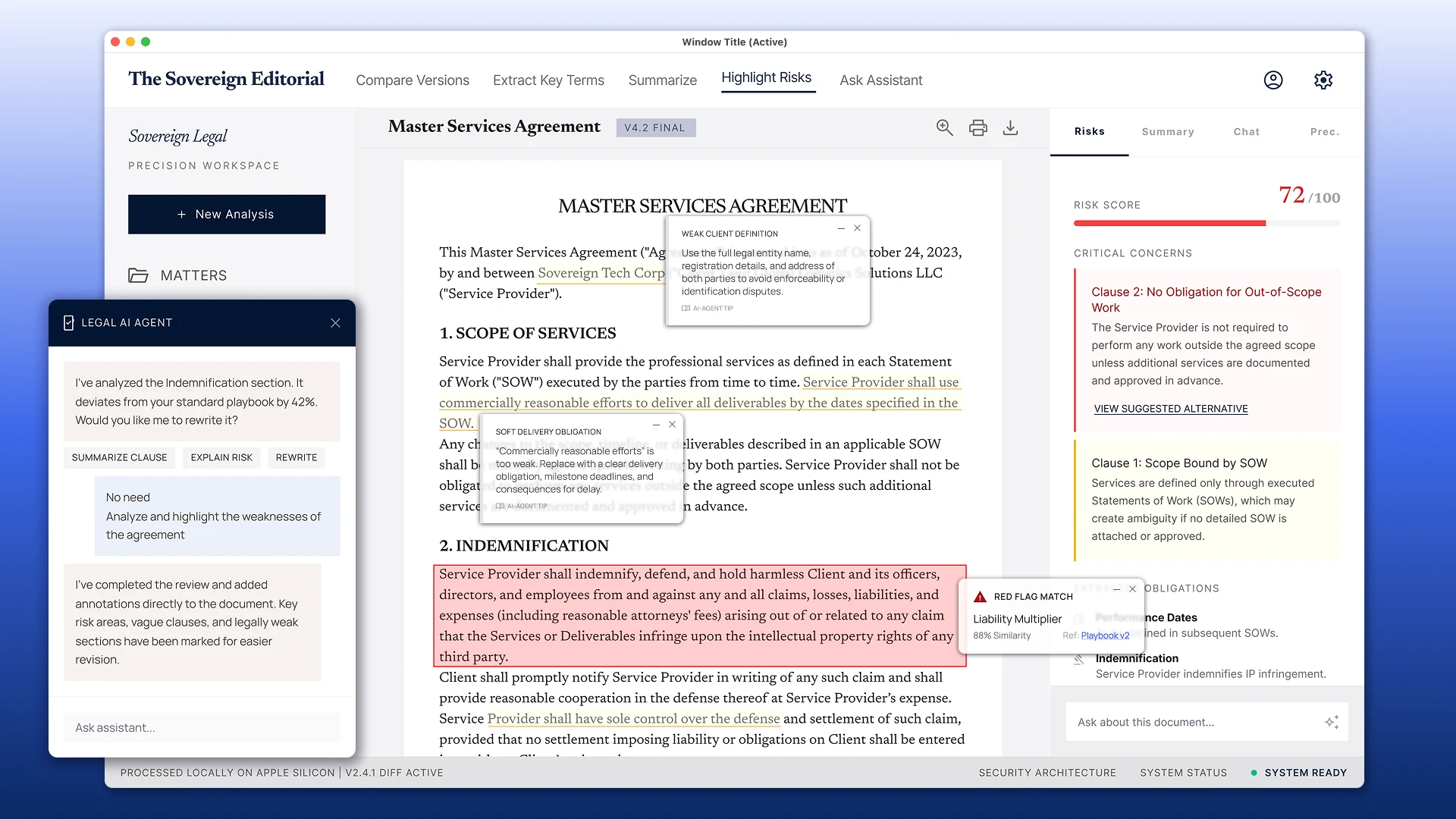

Analysis of contracts and documents

Industry: Distribution, wholesale trade

Size: 200–300 employees

Problem: The legal department processed up to 50 supply contracts per month. Initial review of each document took between 40 minutes and an hour and a half, including checking payment terms, parties' responsibilities, and any non-standard clauses.

Solution: A local AI system for document analysis was deployed on a Mac Mini. The model extracts key terms, compares them with an internal template, and generates a structured summary with notes on disputed points. Initial analysis time was reduced to 5–7 minutes. The final decision still rests with the lawyer, who now starts from a ready-made structured analysis. All documents are processed locally.

CRM with an AI layer

Industry: B2B Sales, Professional Services

Size: 40–60 employees

Problem: The CRM had accumulated a history of hundreds of clients – messages, calls, deals, and rejections. Before each call, managers spent time manually reviewing the history, but patterns in the data went unnoticed.

Solution: An AI agent was deployed on a Mac Mini, integrated with the CRM via API. Before a call, the agent automatically generates a client summary: interaction history, open questions, and the context of the last meeting. After the call, the agent records the results and suggests the next step. Over time, the system began to identify patterns of successful deals across segments. Call preparation time was reduced from 15–20 minutes to 2–3.

Local AI vs. Cloud AI

| Parameter | Local AI | Cloud AI |
|---|---|---|
| Confidentiality | Data does not leave the company | Data is processed on external servers |
| Price | Fixed investment in hardware | Variable costs that grow with volume |
| Model quality | Limited to models that can run locally | Access to the most powerful models (GPT-4o, Claude 3.5) |
| Speed of implementation | Requires setup and time | Quick start via API |
| Customization flexibility | Full control | Limited by provider capabilities |
| Scalability | Limited by hardware | Scales easily |

When does an on-premises solution pay for itself? As a rough guide: if a company spends €2,000–€3,000 per month on cloud AI (API costs plus tools), a Mac Mini M4 Pro including setup and customization will pay for itself in 6–12 months. With higher loads, it pays for itself sooner; with lower loads, the cloud may be more cost-effective.
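The payback estimate is simple division: the upfront cost divided by the monthly savings. The sketch below makes the assumptions explicit; the figures in the example are hypothetical placeholders, not quotes for real hardware or services.

```python
def payback_months(upfront_cost: float, monthly_cloud_cost: float,
                   monthly_local_cost: float = 0.0) -> float:
    """Months until a local setup pays for itself compared with cloud AI.

    upfront_cost: hardware plus setup and customization
    monthly_cloud_cost: current spend on cloud AI (API fees plus tools)
    monthly_local_cost: ongoing local costs (electricity, maintenance, support)
    """
    monthly_savings = monthly_cloud_cost - monthly_local_cost
    if monthly_savings <= 0:
        return float("inf")  # at this load, the local setup never pays off
    return upfront_cost / monthly_savings


# Hypothetical inputs: EUR 15,000 upfront, EUR 2,500/month cloud spend, EUR 500/month local upkeep
print(f"{payback_months(15_000, 2_500, 500):.1f} months")  # 7.5 months
```

Including ongoing local costs matters: with low cloud spend, the savings can shrink to zero, which matches the point that the cloud may be more cost-effective at lower loads.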

For tasks where privacy is critical, the calculation is different: a cloud solution may simply be unacceptable, regardless of cost.

Many companies are moving towards a hybrid architecture: on-premises for sensitive data and high-frequency tasks, and the cloud for tasks that require the maximum model capacity.

Limitations and nuances

It would be dishonest not to say this outright.

Not everything can be run locally. The most powerful models (GPT-4o, Claude 3.5/3.7 Sonnet, Gemini Ultra) require enormous computational resources and are available only via API. Local models can match them on some tasks but fall behind on others. For tasks requiring maximum generation accuracy or complex multi-step reasoning, cloud-based models currently have a clear advantage.

There are performance limitations. A Mac Mini, for all its advantages, is not a data center. When multiple users query the AI at the same time, response times increase. For a team of 15–20 users, this is generally not a problem; high-load systems will require more powerful hardware or a cluster architecture.

The system requires setup and maintenance. After installation, initial configuration is needed before the system is fully functional. Models and components need periodic updates, and response quality must be monitored. This is an ongoing process, not a one-time setup.

When a business really needs it

The choice of a local AI infrastructure is justified if several conditions are met simultaneously:

- the company has a significant array of internal data (documents, knowledge bases, interaction history);

- the confidentiality of this data is important – due to regulatory requirements, internal policy or common sense;

- there are repetitive processes that AI can automate or speed up;

- the company is ready for an engineering implementation, rather than looking for a ready-made turnkey product that requires no adaptation.

If this describes you, then a local AI infrastructure is not only possible, it’s advisable.

Result

Local AI on Apple Silicon is a viable and practical approach already being used by companies that value data control and cost predictability.

The Mac Mini M4 Pro delivers ample power, the open-source models offer acceptable quality, and the local deployment toolset is mature and stable.

The main task is to design the architecture properly: configure the context layer and RAG, develop skills for specific business tasks, launch agents, and ensure reliable system operation. Mistakes at this level lead to inaccurate responses as well as scalability and integration problems, so it's important to think the structure through from the very beginning.

We help companies design and launch on-premises AI systems, from architecture selection to a working solution. If you'd like to discuss your project, please contact us.