AI / ML QA Engineer

What does an AI/ML QA Engineer do?

An AI system that hasn't been thoroughly tested is a business risk. A language model can confidently produce incorrect information, a recommender system can return irrelevant results, and a classifier can make systematic mistakes on certain data segments.

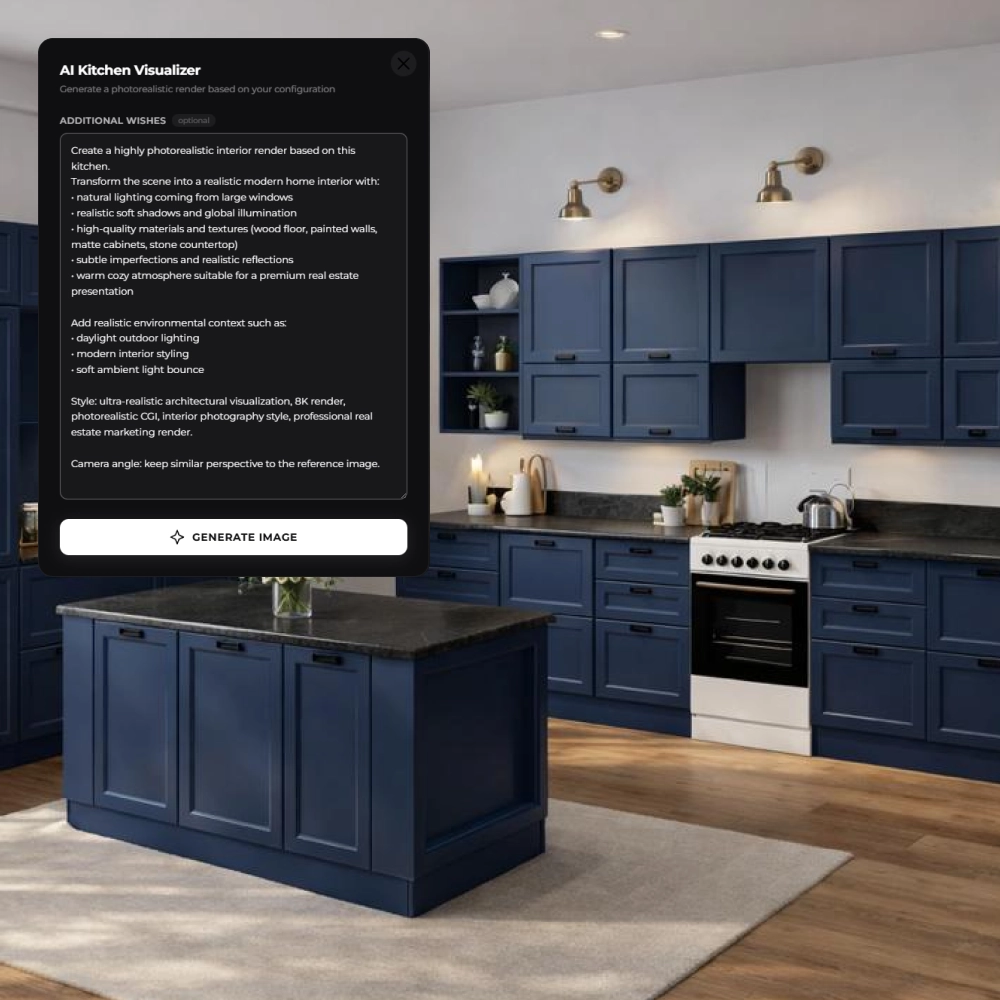

An AI/ML QA Engineer is the specialist who catches and reduces such errors in AI projects by testing the accuracy and robustness of models, identifying hallucinations, checking data quality, and evaluating system performance under load. Their job is to ensure that the AI system behaves predictably and correctly not only in the test environment but also in real-world operating conditions.

In the AI system development chain, the QA Engineer is the last link before launch: they take what the AI Developer has built, the ML Engineer has trained, the Data Engineer has prepared, and the Data Annotator has labeled, and give the final answer on whether the system is ready for real users.

What does the AI/ML QA Engineer's job look like?

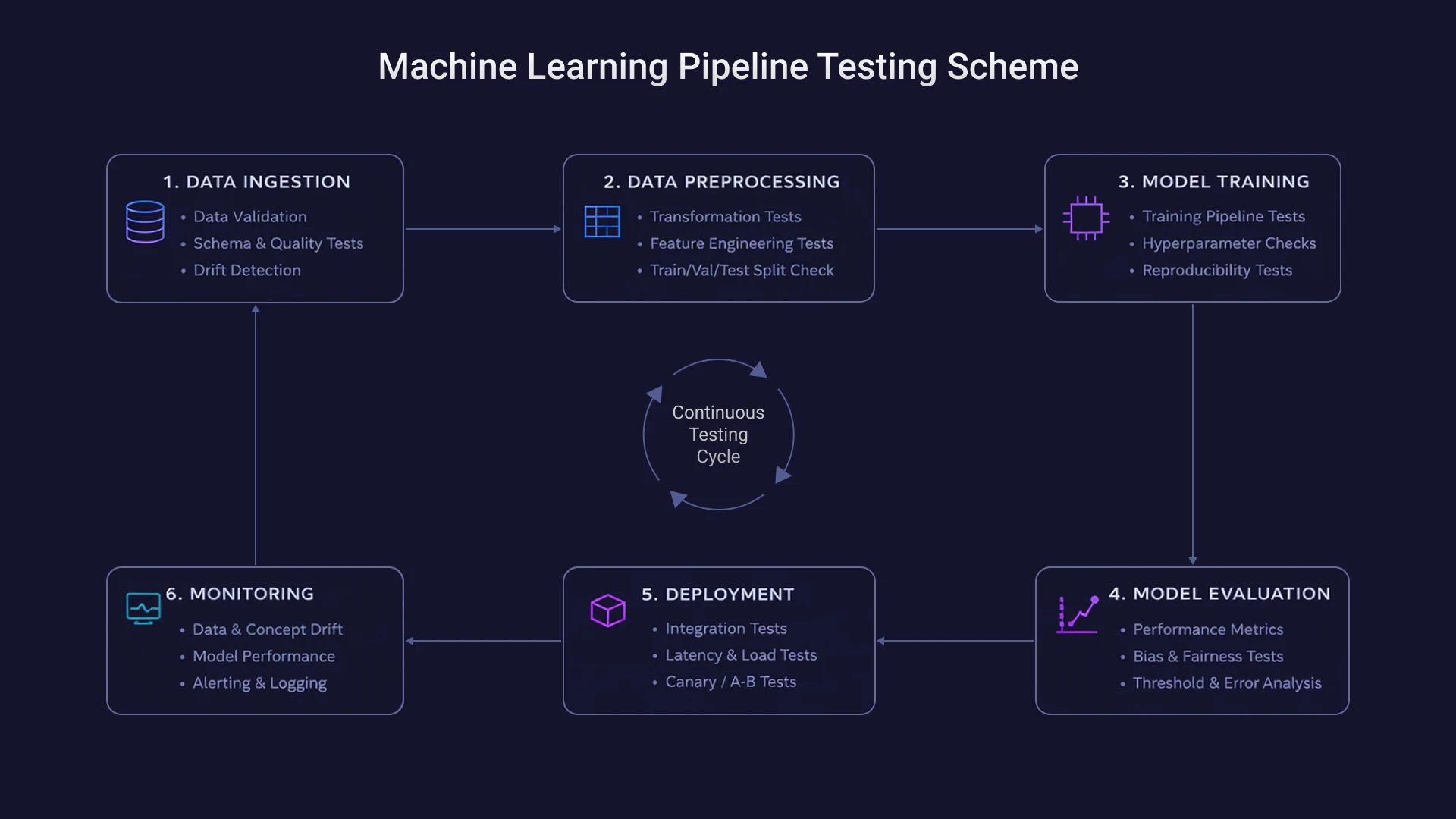

QA in AI projects is not a one-time check before release, but a consistent process that covers data, models, applications, and their behavior in production.

QA Planning. At the outset, the AI/ML QA Engineer defines test scenarios, creates evaluation datasets, and agrees on quality criteria with the team. The depth of subsequent testing depends on how accurately requirements are formulated at this stage.

Dataset QA. Before testing a model, it's important to ensure the quality of the data it was trained on. An AI/ML QA Engineer checks datasets for labeling errors, identifies bias, and assesses the representativeness of samples.
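
As a minimal sketch of two such dataset checks, the snippet below computes the share of each label and finds inputs that appear more than once with different labels. The function names and the sample records are hypothetical and only illustrate the idea; a real project would run comparable checks on its own data format.

```python
from collections import Counter, defaultdict


def label_distribution(labels):
    """Return each label's share of the dataset (empty input gives {})."""
    total = len(labels)
    if total == 0:
        return {}
    counts = Counter(labels)
    return {label: count / total for label, count in counts.items()}


def conflicting_labels(records):
    """Find inputs that occur more than once with different labels.

    Texts are compared after trimming whitespace and lowercasing.
    """
    seen = defaultdict(set)
    for text, label in records:
        seen[text.strip().lower()].add(label)
    return {text: sorted(labels) for text, labels in seen.items() if len(labels) > 1}


# Hypothetical labeled samples, for illustration only.
records = [
    ("refund my order", "billing"),
    ("Refund my order", "shipping"),
    ("where is my parcel", "shipping"),
    ("card was charged twice", "billing"),
]

shares = label_distribution([label for _, label in records])
conflicts = conflicting_labels(records)
print(shares)
print(conflicts)
```

A strongly skewed label distribution hints at representativeness problems, while conflicting labels for the same input usually point to labeling errors that are worth reviewing by hand.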

Machine Learning (ML) Model Testing (Model QA). An AI/ML QA Engineer verifies the model's behavior on test samples, analyzes key metrics—accuracy, recall, and precision—and identifies scenarios where the model fails.
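
One way to find scenarios where a model fails is to break a metric down by data segment instead of looking only at the overall number. The sketch below assumes each test row carries a segment name (here a hypothetical "mobile" / "desktop" split) and uses an illustrative 0.8 threshold; both are assumptions, not part of any specific tool.

```python
from collections import defaultdict


def accuracy_by_segment(rows):
    """rows: iterable of (segment, y_true, y_pred). Returns accuracy per segment."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for segment, y_true, y_pred in rows:
        total[segment] += 1
        correct[segment] += int(y_true == y_pred)
    return {segment: correct[segment] / total[segment] for segment in total}


# Hypothetical test results, for illustration only.
rows = [
    ("mobile", 1, 1), ("mobile", 0, 0), ("mobile", 1, 1), ("mobile", 0, 0),
    ("desktop", 1, 0), ("desktop", 0, 0), ("desktop", 1, 0), ("desktop", 0, 1),
]

per_segment = accuracy_by_segment(rows)
# Segments below an agreed threshold (0.8 is an illustrative value).
weak_segments = [seg for seg, acc in per_segment.items() if acc < 0.8]
print(per_segment, weak_segments)
```

In this toy data the overall accuracy is 5 out of 8, which hides the fact that one segment is handled perfectly and the other almost always fails.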

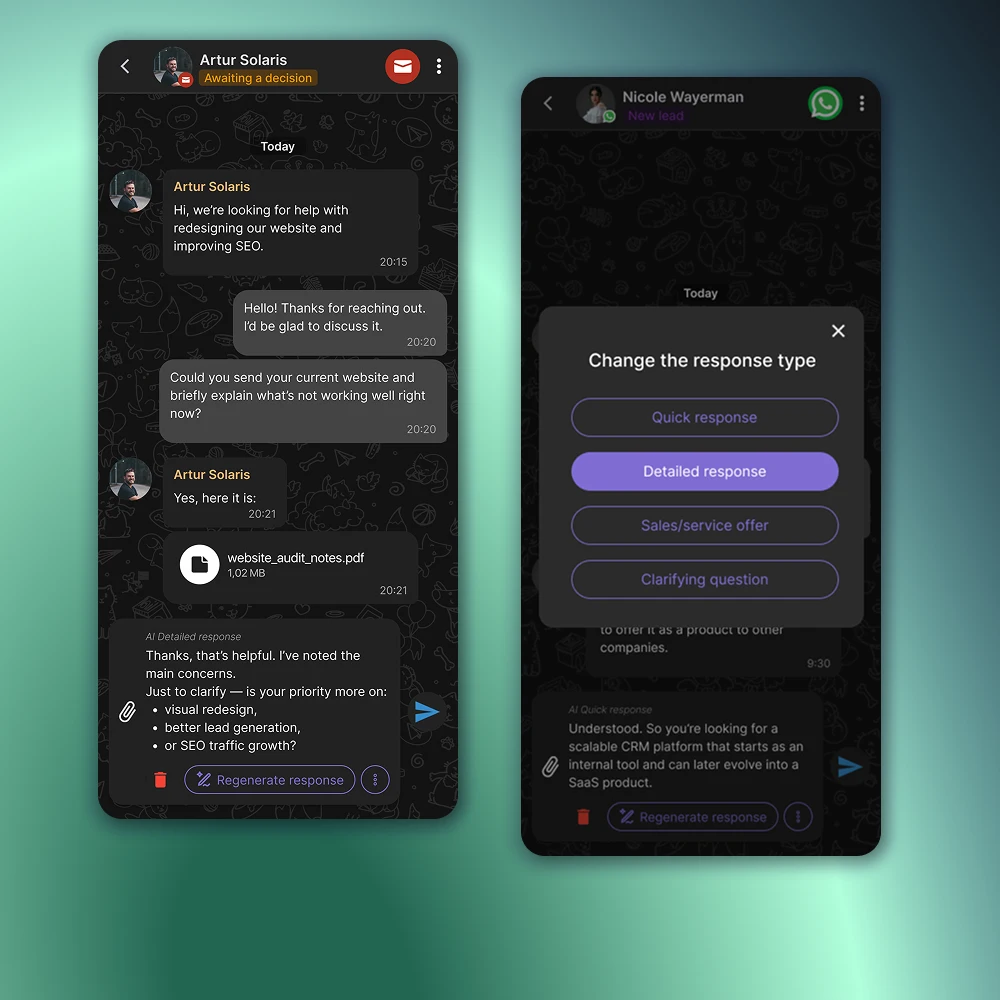

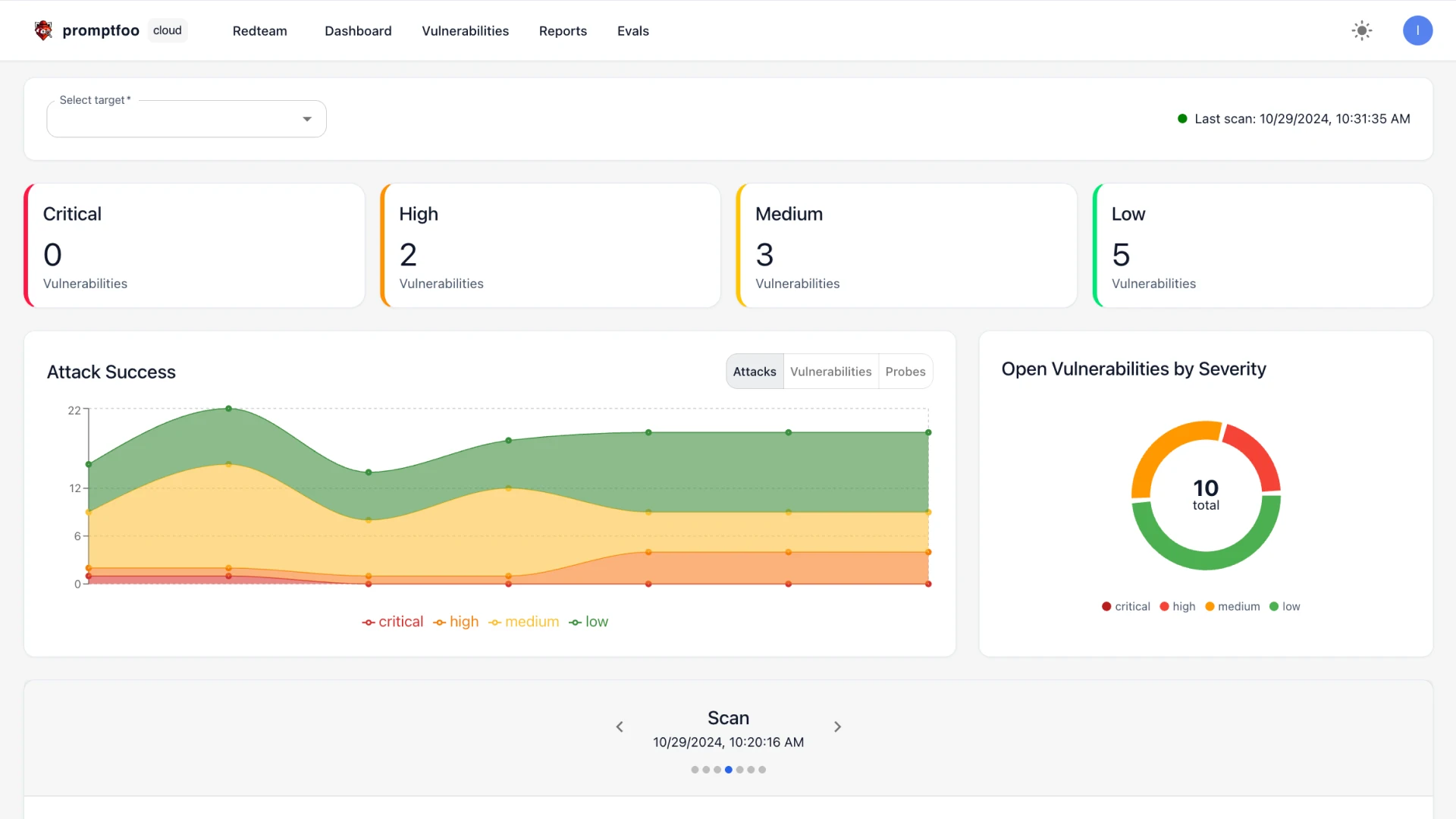

LLM application testing (LLM QA). Language models require a separate approach. An AI/ML QA Engineer verifies the correctness of responses, tests prompt robustness, and identifies hallucinations, that is, cases where the model confidently generates factually incorrect information.
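
Prompt robustness can be sketched as asking the same question in several phrasings, repeating each call a few times, and recording how often the answer passes a check. In the example below, `stub_model` is a hypothetical stand-in for a real LLM call and deliberately fails on an all-caps prompt; the paraphrases and the acceptance check are likewise illustrative assumptions.

```python
def robustness_report(model, paraphrases, is_acceptable, runs_per_prompt=5):
    """Return the pass rate per prompt over repeated calls to the model."""
    report = {}
    for prompt in paraphrases:
        passed = sum(
            1 for _ in range(runs_per_prompt) if is_acceptable(model(prompt))
        )
        report[prompt] = passed / runs_per_prompt
    return report


def stub_model(prompt):
    """Hypothetical stand-in for an LLM call; breaks on all-caps input on purpose."""
    if prompt.isupper():
        return "I cannot help with that."
    return "The card is delivered within 5 business days."


paraphrases = [
    "How long does card delivery take?",
    "When will my card arrive?",
    "HOW LONG DOES CARD DELIVERY TAKE?",
]

report = robustness_report(
    stub_model, paraphrases, lambda response: "5 business days" in response
)
fragile_prompts = [prompt for prompt, rate in report.items() if rate < 1.0]
print(fragile_prompts)
```

Repeating each call matters for real models, because a sampled response can pass once and fail on the next attempt.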

RAG system testing (RAG QA). In retrieval-augmented generation systems, the quality of the response depends not only on the model but also on the relevance of the documents it retrieves. An AI/ML QA Engineer tests the search component and verifies that the sources match the query context.
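
A simple way to test the search component on its own is a hit rate at k: the share of queries for which at least one known relevant document appears among the top k retrieved results. The sketch below assumes an evaluation set where relevant document IDs were marked in advance; the IDs and the function name are hypothetical.

```python
def hit_rate_at_k(results, k):
    """results: list of (retrieved_ids, relevant_ids) pairs, one per query.

    Returns the share of queries with at least one relevant ID in the top k.
    """
    if not results:
        return 0.0
    hits = sum(
        1 for retrieved, relevant in results if set(retrieved[:k]) & set(relevant)
    )
    return hits / len(results)


# Hypothetical retrieval results for three queries.
results = [
    (["d3", "d1", "d7"], {"d1"}),
    (["d2", "d9", "d4"], {"d4"}),
    (["d5", "d6", "d8"], {"d0"}),
]

print(hit_rate_at_k(results, k=1))
print(hit_rate_at_k(results, k=3))
```

Measuring retrieval separately from generation helps tell apart a model that writes a poor answer from a search step that never supplied the right source.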

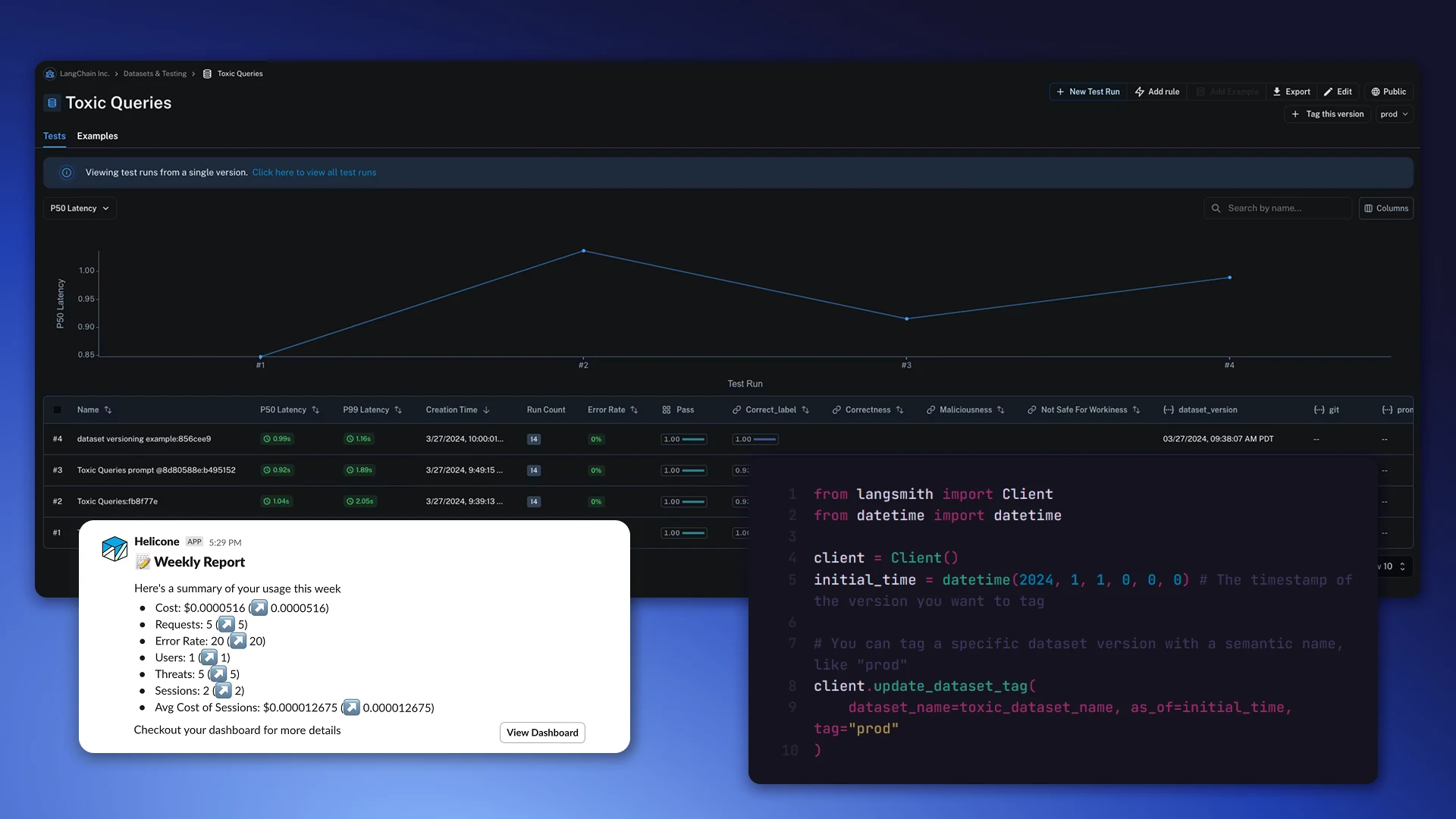

Load testing (Performance QA). An AI/ML QA Engineer checks latency—the system's response time—and tests its behavior under peak load to ensure performance remains within acceptable limits under real-world traffic.
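
Latency under load is usually summarized with percentiles rather than an average, because a few slow requests can dominate user experience. The sketch below uses the nearest-rank method on a hypothetical list of response times in milliseconds; the sample values are made up for illustration.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical response times in milliseconds collected during a load test.
latencies_ms = [120, 135, 110, 980, 140, 125, 130, 150, 115, 145]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
mean = sum(latencies_ms) / len(latencies_ms)
print(p50, p95, mean)
```

Here the median stays low while the 95th percentile exposes the single slow request, which the mean only partly reflects.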

Production Monitoring. After launch, the AI/ML QA Engineer analyzes logs, monitors for anomalies in model behavior, and identifies quality degradation as input data changes.
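
One common way to quantify how far input data has moved from a baseline is the Population Stability Index (PSI), which compares the share of values per bin in two samples. It is named here as one possible technique, not as the method this text prescribes. The bin edges, sample values, and the small `eps` floor for empty bins are assumptions chosen for illustration, and both samples are assumed to be non-empty.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two non-empty numeric samples.

    edges: sorted bin boundaries; values fall into len(edges) + 1 bins.
    eps: floor for empty bins so the logarithm stays defined.
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for value in values:
            index = sum(1 for edge in edges if value >= edge)
            counts[index] += 1
        return [max(count / len(values), eps) for count in counts]

    expected_shares = shares(expected)
    actual_shares = shares(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(expected_shares, actual_shares)
    )


# Hypothetical feature values: training baseline vs. recent production traffic.
baseline = [5, 12, 15, 18, 22, 25, 28, 35]
live = [22, 25, 31, 33, 35, 38, 40, 45]
edges = [10, 20, 30]

drift_score = psi(baseline, live, edges)
print(drift_score)
```

A frequently cited rule of thumb treats values above roughly 0.25 as a significant shift, but the alert threshold should be agreed per project.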

AI/ML QA Engineer Tools

The AI/ML QA Engineer stack covers tools for model testing, monitoring, and automated checks.

LLM testing: LangSmith, Promptfoo, DeepEval – tools for assessing the quality of language model responses, testing prompts, and detecting hallucinations.

AI System Monitoring: Langfuse and Helicone provide observability for LLM applications in production, including request tracking, latency analysis, and anomaly detection.

ML model evaluation: MLflow and Weights & Biases are used to track experiments, compare metrics, and control model versions.

QA automation: Playwright, Selenium, and Cypress are used for end-to-end testing of AI application interfaces; Postman is used for checking application programming interfaces (APIs).

AI System Quality Metrics

The quality of an AI system is determined by a set of metrics, each of which is responsible for its own aspect of the model's behavior.

Accuracy is the proportion of correct model responses on the test sample and gives a general picture of model quality.

Precision and Recall are used where overall accuracy is not enough and the balance between false positives and false negatives (missed cases) matters – for example, in classification tasks.
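
To make the difference concrete, the sketch below computes accuracy, precision, and recall from true and predicted labels for a binary task. The labels are hypothetical, and returning 0.0 when a denominator is zero is a simplifying assumption.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, and recall for a binary classification task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return {
        "accuracy": (tp + tn) / len(pairs) if pairs else 0.0,
        # Precision: of everything flagged as positive, how much was right.
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        # Recall: of all real positives, how many were found.
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical labels: two real positives among ten samples, one of them missed.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

In this toy case accuracy looks high, yet recall shows that half of the real positives were missed.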

Hallucination rate – the proportion of responses in which the model generates factually incorrect information with a high degree of confidence. This is especially critical for LLM applications in medicine, law, and finance.
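
As a rough sketch, the rate can be computed from reviewed responses in which a reviewer (or an automated grader) has already marked each answer as hallucinated or not. The record fields `domain` and `hallucinated` and the sample data are hypothetical; how the verdicts are produced is outside the scope of this example.

```python
from collections import defaultdict


def hallucination_rate(reviews):
    """Share of reviewed responses marked as hallucinated (0.0 for no reviews)."""
    if not reviews:
        return 0.0
    return sum(1 for review in reviews if review["hallucinated"]) / len(reviews)


def rate_by_domain(reviews):
    """Hallucination rate per domain, to see where the risk concentrates."""
    groups = defaultdict(list)
    for review in reviews:
        groups[review["domain"]].append(review)
    return {domain: hallucination_rate(items) for domain, items in groups.items()}


# Hypothetical review verdicts, for illustration only.
reviews = [
    {"domain": "finance", "hallucinated": False},
    {"domain": "finance", "hallucinated": True},
    {"domain": "finance", "hallucinated": False},
    {"domain": "finance", "hallucinated": False},
    {"domain": "legal", "hallucinated": False},
    {"domain": "legal", "hallucinated": False},
    {"domain": "medical", "hallucinated": True},
    {"domain": "medical", "hallucinated": False},
]

overall = hallucination_rate(reviews)
per_domain = rate_by_domain(reviews)
print(overall, per_domain)
```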

Response relevance evaluates how well a model's response matches the context and user intent, rather than simply being grammatically correct.

Latency is the system response time, which directly impacts user experience and product scalability.

The error rate measures the frequency of crashes and incorrect responses in production and serves as a key indicator of system stability.

Where is AI/ML QA applied?

Any AI or ML system that interacts with users or is involved in business decision-making requires a separate testing process.

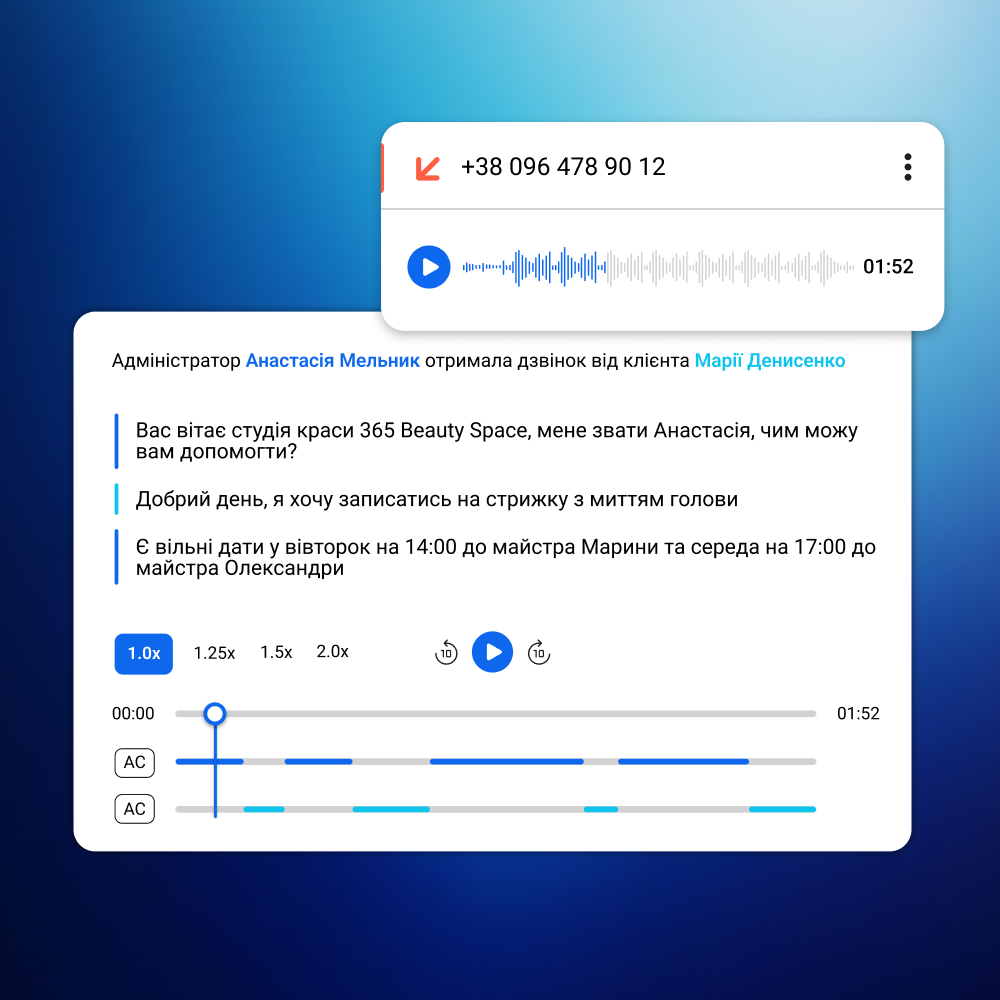

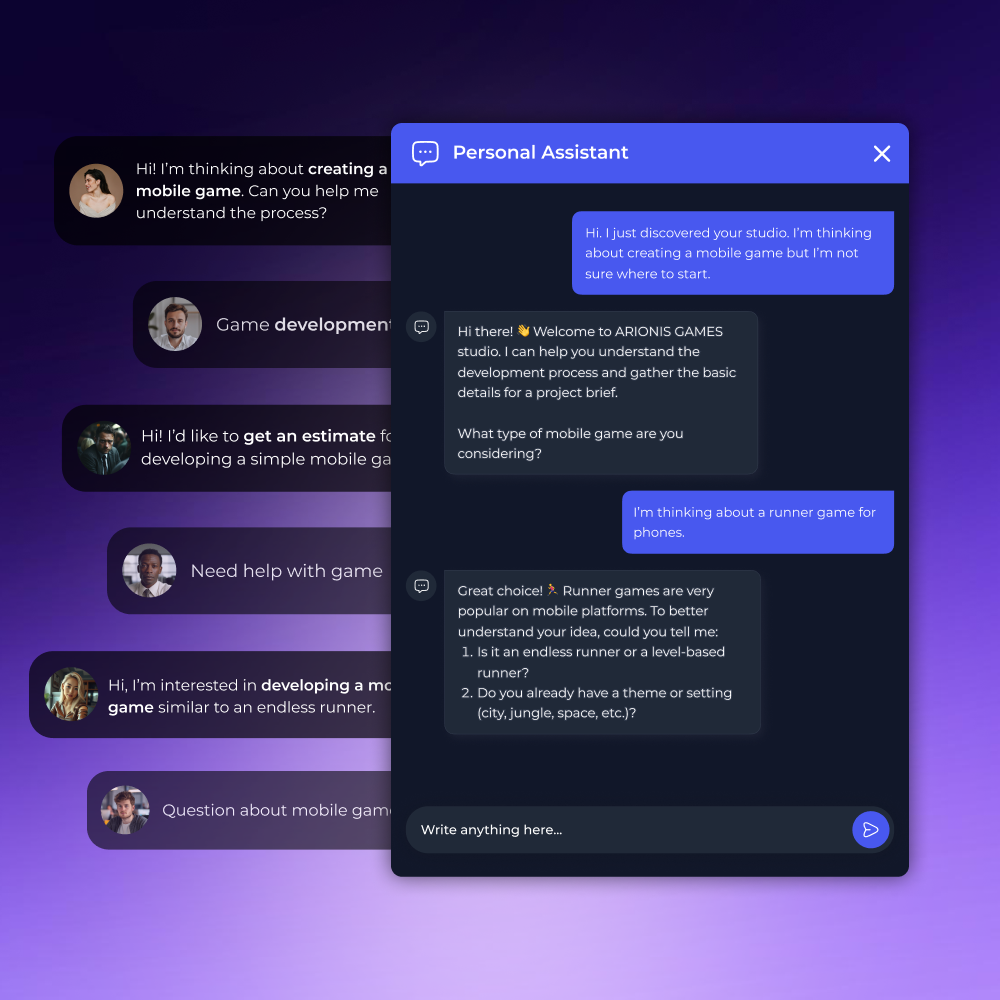

AI chatbots and assistants. Imagine a corporate assistant answering employee questions about internal company policies, or a customer service bot at a bank providing product consultations. Such systems must provide accurate responses in any dialogue scenario, including provocative and non-standard queries. An AI/ML QA Engineer checks the model's robustness, identifies hallucinations, and tests edge cases before the system is deployed to users.

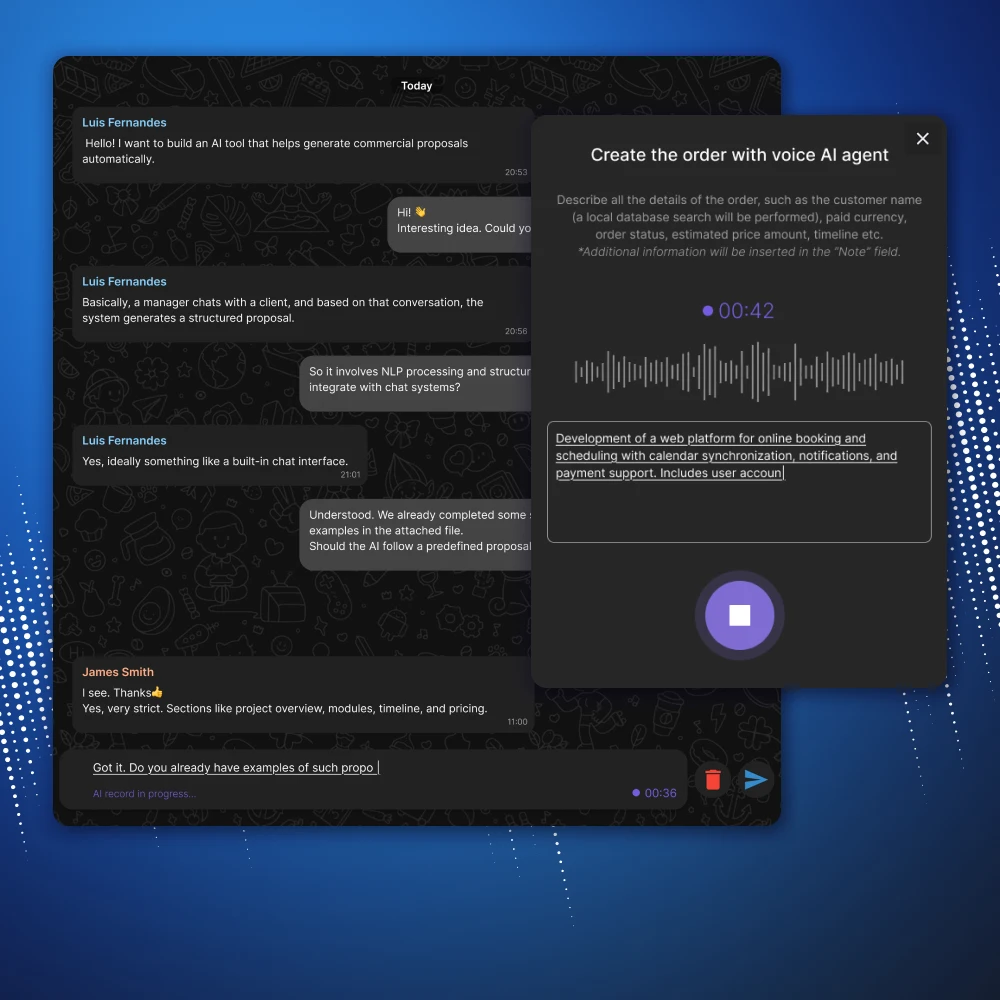

RAG systems. Companies are increasingly building internal knowledge bases on top of LLMs: an employee asks a question, and the system finds the necessary documents and formulates an answer. The quality of this solution depends not only on how the model generates text but also on whether it finds the correct documents. An AI/ML QA Engineer tests both components and verifies that the sources match the context of the request.

Recommender systems. A streaming service recommends movies, a marketplace recommends products, and an HR platform recommends job openings. An AI/ML QA Engineer verifies that the recommendations are relevant, bias-free, and consistent with the product's business logic.

Computer vision solutions. Models that recognize manufacturing defects, verify documents, or analyze medical images are tested for classification accuracy and resilience to changing conditions, such as lighting, viewing angle, and image quality.

Why hire an AI/ML QA Engineer at CortexIntellect

Specializing in AI, not adapting classic QA. Our specialists work specifically with AI systems—they don't transfer standard QA practices to ML products, but rather use approaches developed for the specifics of nondeterministic models.

Understanding the entire AI stack. A QA Engineer who doesn't understand how a model is structured and what data it was trained on is testing blind. Our specialists work in tandem with the ML Engineer and Data Engineer and know where to look for problems at every level of the system.

Experience across various scales and industries. Our specialists have tested AI systems from MVP products to enterprise solutions with millions of users – in fintech, medical technology, e-commerce, and SaaS – and understand how quality requirements vary depending on context and workload.

Flexible engagement format. One-time testing before release, support at a specific stage, or long-term maintenance – the format is determined by the task, not a standard service package.

Hire an AI/ML QA Engineer for your project

Contact us – we'll discuss the quality requirements for your AI system, select a specialist for the task, and get you up and running quickly.

FAQ

How is AI/ML QA different from regular software testing?

In traditional testing, behavior is reproducible: the same input produces the same output. In AI systems, model behavior is non-deterministic: the result depends on the data, the context, and the probabilistic nature of the model. An AI/ML QA Engineer therefore works with quality metrics, evaluation samples, and specialized tools that are not part of the standard QA process.

At what stage of development should a QA Engineer be involved?

The sooner, the better. Ideally, during the data preparation stage, before model training begins. This allows us to identify dataset quality issues before they impact the results. If the model has already been trained, a QA Engineer can be involved before release—but in this case, some issues will have to be addressed retrospectively.

How is the performance of a QA Engineer measured?

Results are recorded in specific metrics: accuracy, hallucination rate, latency, and error rate. Before starting work, we agree on target values for these metrics, and after testing, we provide a report with the results and recommendations.
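
Agreed target values can be checked automatically as a simple quality gate. The sketch below compares measured metrics against targets and returns the ones that miss. All numbers, the metric keys, and the "min" / "max" convention are hypothetical and would be replaced by whatever is agreed for a given project.

```python
def evaluate_gate(measured, targets):
    """Return metrics that miss their target as {name: (value, threshold)}.

    targets maps a metric name to (direction, threshold), where direction is
    "min" (value must be at least the threshold) or "max" (at most).
    """
    failures = {}
    for name, (direction, threshold) in targets.items():
        value = measured[name]
        passed = value >= threshold if direction == "min" else value <= threshold
        if not passed:
            failures[name] = (value, threshold)
    return failures


# Hypothetical targets agreed before testing starts.
targets = {
    "accuracy": ("min", 0.90),
    "hallucination_rate": ("max", 0.02),
    "p95_latency_ms": ("max", 800),
    "error_rate": ("max", 0.01),
}

# Hypothetical measurements taken after testing.
measured = {
    "accuracy": 0.93,
    "hallucination_rate": 0.035,
    "p95_latency_ms": 640,
    "error_rate": 0.004,
}

failures = evaluate_gate(measured, targets)
print(failures)
```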

Is it possible to hire a QA Engineer for just one stage – for example, before a release?

Yes. A specialist can be brought in for a specific task: LLM QA before launch, load testing, or setting up monitoring in production. The format of the collaboration is discussed based on the specific task.

What happens after the system is launched in production?

The AI system's behavior changes as input data and user scenarios change. A QA Engineer sets up monitoring that tracks key metrics in real time and alerts the team to quality degradation before it becomes noticeable to users.