Data Engineer

What does a Data Engineer do?

Digital products generate thousands of new records every day: users click, place orders, and send requests, and systems write logs. All of this ends up in databases, files, and message queues, and it provides no benefit until a system collects the data, cleans it, and delivers it where it is needed.

This is what a Data Engineer does: they develop automated data pipelines to collect, transform, and store data from disparate sources and make it available to analytics systems, machine learning models (ML models), and AI applications.

In the AI systems development chain, the Data Engineer comes first: they collect and prepare the data, which is then annotated by the Data Annotator, used by the ML Engineer to train models, and used by the AI Developer to build finished AI applications. Without the Data Engineer, this chain simply cannot function.

Choose a developer

Data processing pipeline

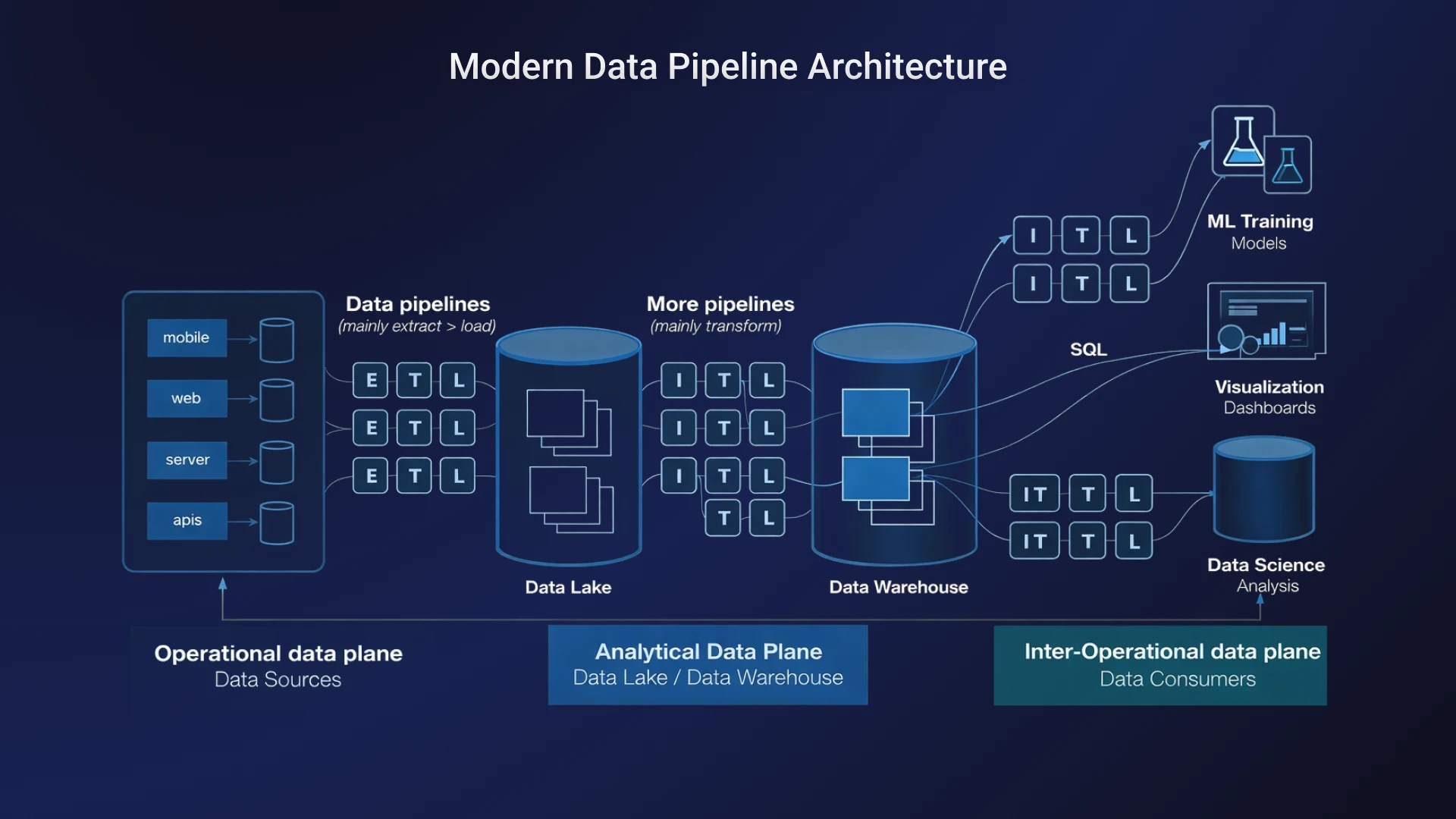

A data engineer works with data at every stage of its lifecycle—from the moment it's generated to when it's ready for use. Here's how this process works.

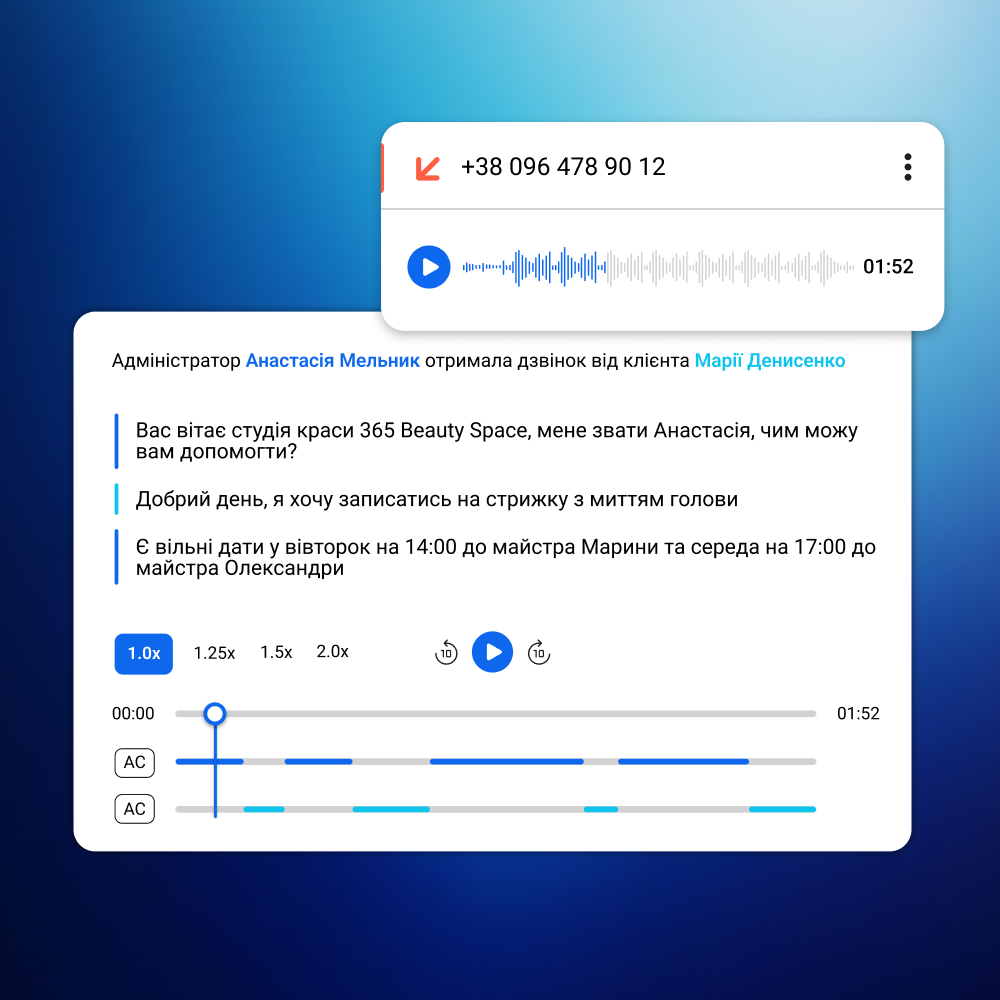

Data Collection. Data rarely resides in a single location: it comes from application programming interfaces (APIs) of external services, relational and non-relational (NoSQL) databases, log systems, and streaming platforms. The Data Engineer's job is to identify all these sources, configure connectors, and ensure regular, lossless collection.

Data Ingestion. Collected data is transferred to the processing system in one of two ways: scheduled batch processing, where data is accumulated and processed in portions, or real-time streaming ingestion, where each event is processed as it arrives. The data engineer selects the appropriate model based on the project's requirements.
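The difference between the two ingestion models can be sketched in a few lines of plain Python. This is only an illustration: the function names `ingest_batch` and `ingest_stream` and the sample events are hypothetical, and a real project would use a scheduler or a streaming platform rather than in-memory lists.

```python
from typing import Callable, Iterable, Iterator

Event = dict


def ingest_batch(events: Iterable[Event], batch_size: int) -> Iterator[list]:
    """Batch model: accumulate events and hand them over in portions."""
    batch = []
    for event in events:
        batch.append(event)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # flush the last, possibly smaller, portion
        yield batch


def ingest_stream(events: Iterable[Event], handle: Callable[[Event], None]) -> None:
    """Streaming model: pass each event to the handler as soon as it arrives."""
    for event in events:
        handle(event)


# Hypothetical sample data for illustration only.
events = [{"id": i} for i in range(5)]

batches = list(ingest_batch(events, batch_size=2))

seen_ids = []
ingest_stream(events, lambda event: seen_ids.append(event["id"]))
```

Batch ingestion trades latency for simpler, cheaper processing, while streaming keeps latency low at the cost of handling every event individually.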

Data Processing. Raw data is almost never suitable for direct use: at this stage, duplicates and errors are removed, formats are standardized, the data is enriched from related sources, and transformed into structures convenient for analysis.
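As a rough illustration of this stage, the sketch below removes invalid records and duplicates, standardizes email and date formats, and enriches each record from a lookup table. The field names, the two date formats, and the `country_names` lookup are assumptions made up for the example.

```python
from datetime import datetime

# Hypothetical raw input: one duplicate, one mixed date format, one invalid record.
raw_orders = [
    {"order_id": "1", "email": " Ann@Example.com ", "date": "2024-03-01", "country": "de"},
    {"order_id": "1", "email": "ann@example.com", "date": "2024-03-01", "country": "de"},
    {"order_id": "2", "email": "bob@example.com", "date": "01.03.2024", "country": "fr"},
    {"order_id": "3", "email": "", "date": "not a date", "country": "de"},
]

# Hypothetical related source used for enrichment.
country_names = {"de": "Germany", "fr": "France"}

DATE_FORMATS = ("%Y-%m-%d", "%d.%m.%Y")


def parse_date(value):
    """Return the date in ISO format, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(records):
    seen_ids = set()
    result = []
    for rec in records:
        email = rec["email"].strip().lower()  # standardize the format
        date = parse_date(rec["date"])
        if not email or date is None:
            continue  # drop records with errors
        if rec["order_id"] in seen_ids:
            continue  # drop duplicates
        seen_ids.add(rec["order_id"])
        result.append({
            "order_id": int(rec["order_id"]),
            "email": email,
            "date": date,
            "country": country_names.get(rec["country"], "Unknown"),  # enrich
        })
    return result


cleaned = clean(raw_orders)
```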

Data Storage. Processed data is stored in systems optimized for specific tasks: data lakes are suitable for large volumes of raw and semi-structured data, while data warehouses are suitable for structured data and analytical queries. A data engineer designs storage schemas taking into account access speed, cost, and scalability.

Data Delivery. The final stage is to convert the data into a format suitable for a specific consumer. The Data Engineer creates ready-made data sets, configures the access API, and ensures that the data remains up-to-date.

Key Tasks of a Data Engineer

Here is what a Data Engineer is responsible for on a project.

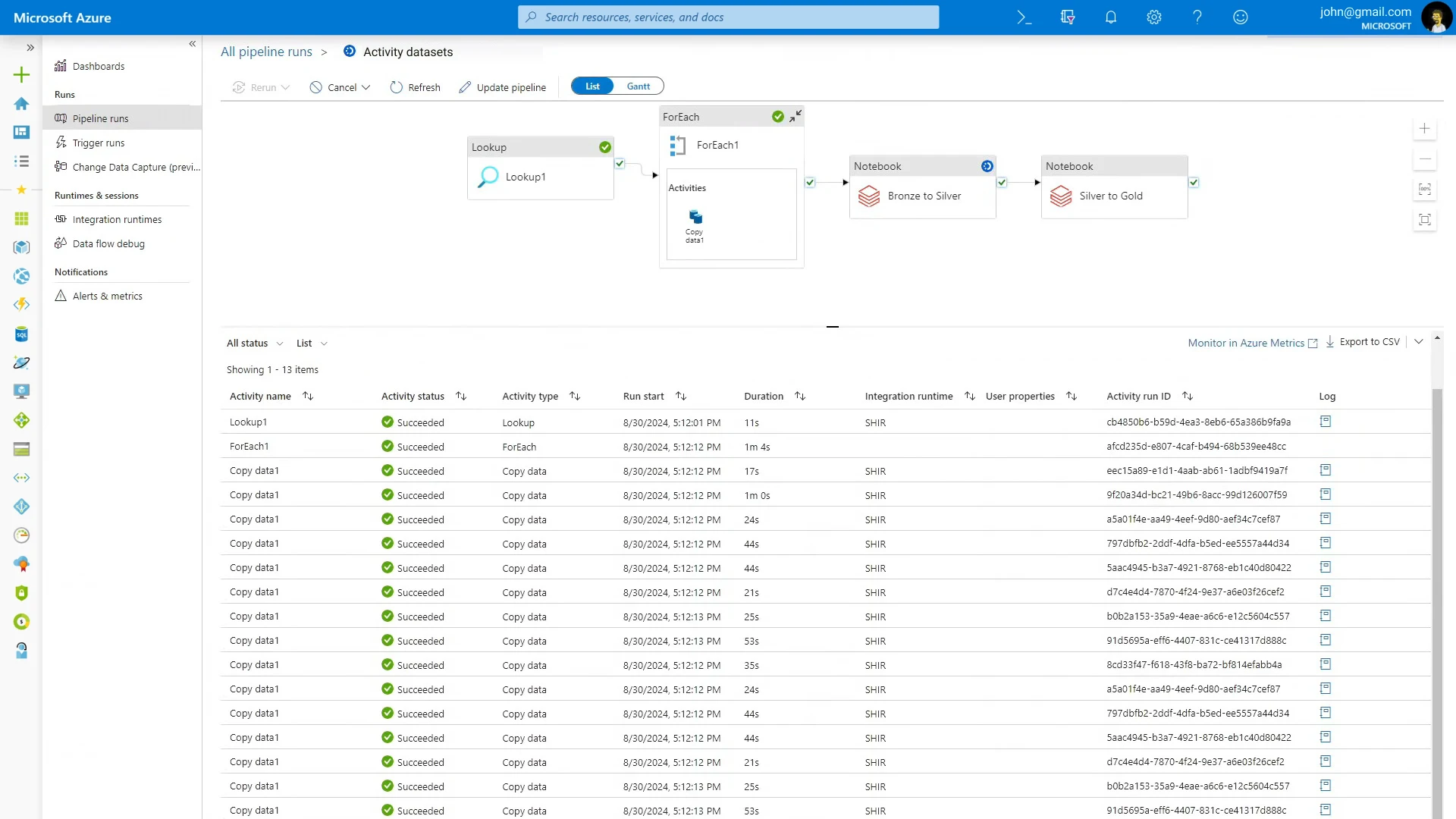

Development of ETL pipelines.

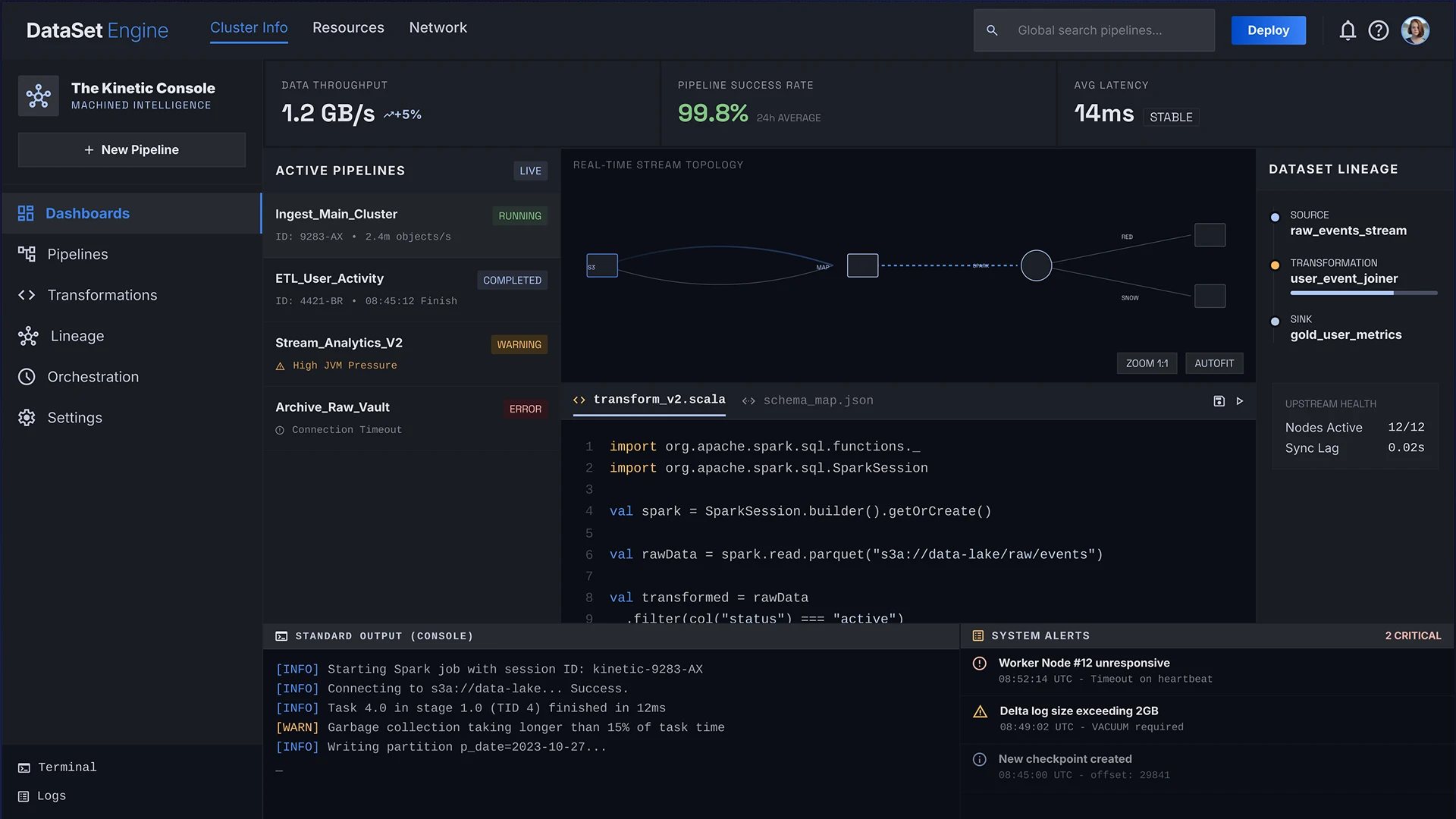

A Data Engineer configures automatic extraction of data from sources, its transformation according to specified rules, and its loading into target storage, either on a schedule or in real time.
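A minimal extract-transform-load sketch using only the Python standard library might look like this. The CSV content, the `payments` table, and the function names are assumptions for illustration; an in-memory SQLite database stands in for the target storage.

```python
import csv
import io
import sqlite3

# Hypothetical source data; in practice this would come from a file, API, or database.
SOURCE_CSV = """user_id,amount,currency
1,10.50,usd
2,7.00,USD
1,3.25,usd
"""


def extract(text):
    """Extract: read raw rows from the source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: cast types and normalize the currency code."""
    return [(int(r["user_id"]), float(r["amount"]), r["currency"].upper()) for r in rows]


def load(conn, rows):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments (user_id INTEGER, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse
load(conn, transform(extract(SOURCE_CSV)))

totals = conn.execute(
    "SELECT user_id, SUM(amount) FROM payments GROUP BY user_id ORDER BY user_id"
).fetchall()
```

Keeping the three steps as separate functions makes it easier to run the same pipeline on a schedule or to swap the source and target later.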

Big data processing.

When it comes to terabytes and petabytes, a Data Engineer designs distributed computing using Apache Spark and PySpark, optimizes queries, and manages cluster resources so that the system can handle the load without losing performance.

Integration of data sources.

A Data Engineer connects relational databases (PostgreSQL, MySQL), non-relational storage (NoSQL: MongoDB, Cassandra), external APIs, message queues, and log aggregators into a single stream.

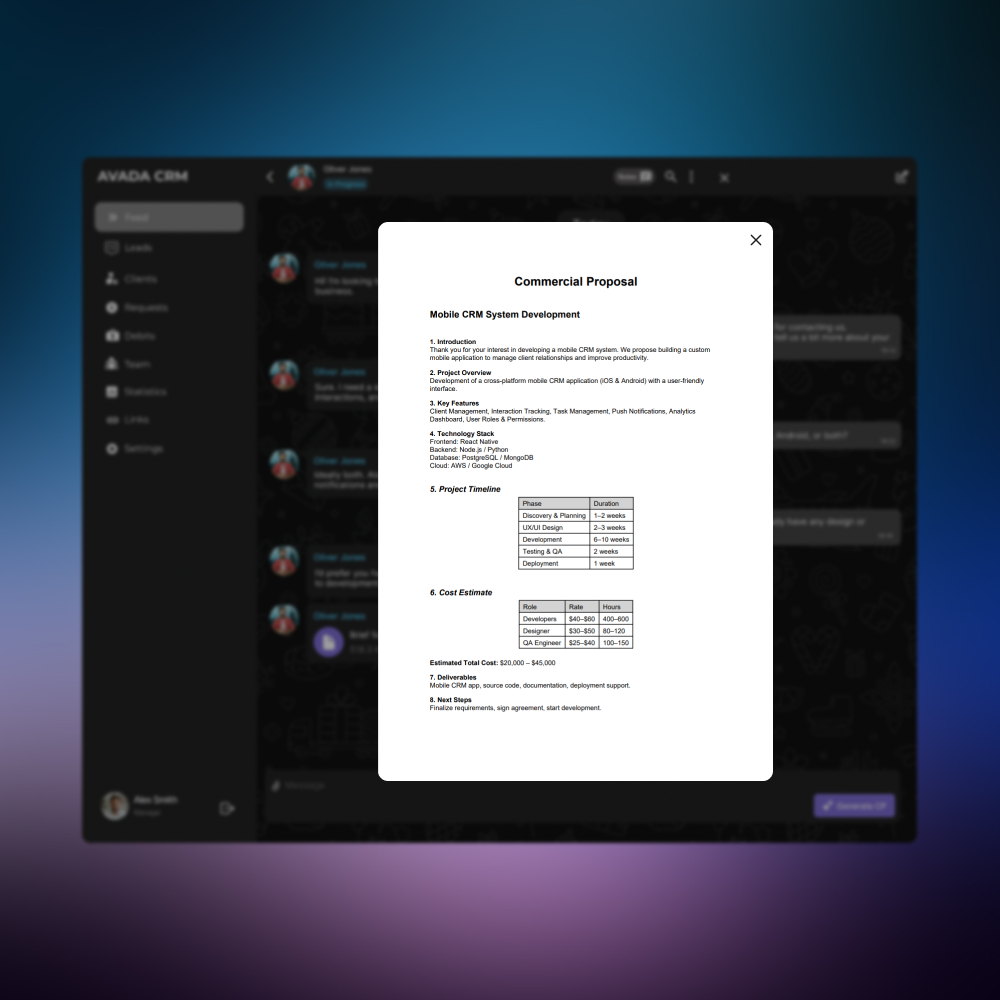

Preparing training datasets.

The Data Engineer builds training datasets of the required size and quality, works together with the Data Annotator, and keeps the datasets regularly updated as new data accumulates.
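One common way to keep a training dataset stable while it is regularly updated is to assign each record to the training or validation set by hashing its identifier, so that existing records never change sides when new data arrives. The sketch below assumes this approach; the function name `assign_split` and the 20% validation share are illustrative choices, not a prescribed method.

```python
import hashlib


def assign_split(record_id: str, validation_share: float = 0.2) -> str:
    """Deterministically map a record ID to 'train' or 'validation'."""
    digest = hashlib.sha256(record_id.encode("utf-8")).hexdigest()
    # Use the first 32 bits of the hash as a stable value in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "validation" if bucket < validation_share else "train"


# Hypothetical record IDs; new IDs can be added later without reshuffling old ones.
record_ids = [f"record-{i}" for i in range(1000)]
splits = {rid: assign_split(rid) for rid in record_ids}
```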

Design of data lakes and data warehouses.

A Data Engineer designs the storage schema, manages data partitioning, monitors data quality, and configures access rights.
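Partitioning often means laying data out so that a query can skip everything it does not need. The sketch below groups records into date-based partitions using the `key=value` directory convention that many data lake tools recognize. The table name, field names, and helper functions are hypothetical.

```python
from collections import defaultdict
from datetime import date


def partition_path(table: str, event_date: date) -> str:
    """Build a date-based partition path such as events/year=2024/month=03/day=01."""
    return (
        f"{table}/year={event_date.year}"
        f"/month={event_date.month:02d}/day={event_date.day:02d}"
    )


def group_by_partition(table, records):
    """Group records by the partition they would be written to."""
    partitions = defaultdict(list)
    for rec in records:
        partitions[partition_path(table, rec["event_date"])].append(rec)
    return dict(partitions)


# Hypothetical sample records.
records = [
    {"event_date": date(2024, 3, 1), "action": "click"},
    {"event_date": date(2024, 3, 1), "action": "purchase"},
    {"event_date": date(2024, 3, 2), "action": "click"},
]
partitions = group_by_partition("events", records)
```

With this layout, a query for a single day only has to read one directory instead of scanning the whole table.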

Optimization of data processing.

The Data Engineer analyzes bottlenecks, redesigns schemas, and implements caching and incremental processing where it reduces data delivery time and computing costs.
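Incremental processing is frequently implemented with a "watermark": the pipeline remembers the timestamp of the newest record it has handled and, on the next run, processes only records newer than that. The sketch below assumes records carry an `updated_at` field in ISO 8601 format (which sorts correctly as text); the field and function names are illustrative.

```python
def process_incrementally(rows, watermark):
    """Return rows newer than the watermark, plus the updated watermark."""
    new_rows = [r for r in rows if r["updated_at"] > watermark]
    if new_rows:
        watermark = max(r["updated_at"] for r in new_rows)
    return new_rows, watermark


# Hypothetical source table.
source = [
    {"id": 1, "updated_at": "2024-03-01T10:00:00"},
    {"id": 2, "updated_at": "2024-03-01T11:00:00"},
]

# First run: everything is new.
first_run, watermark = process_incrementally(source, "1970-01-01T00:00:00")

# A new record arrives; the second run touches only that record.
source.append({"id": 3, "updated_at": "2024-03-02T09:00:00"})
second_run, watermark = process_incrementally(source, watermark)
```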

Technologies a Data Engineer Works With

A data engineer's stack is one of the broadest among technical specialists. Here's what it typically includes:

Programming languages:

- Python – the main language for working with data;

- SQL – for working with relational storage and analytical queries;

- Java and Scala – for high-load components on Apache Spark.

Data processing tools:

- Apache Spark and PySpark provide distributed processing of large volumes of data;

- Pandas is a flexible tool for transforming data for analytical tasks.

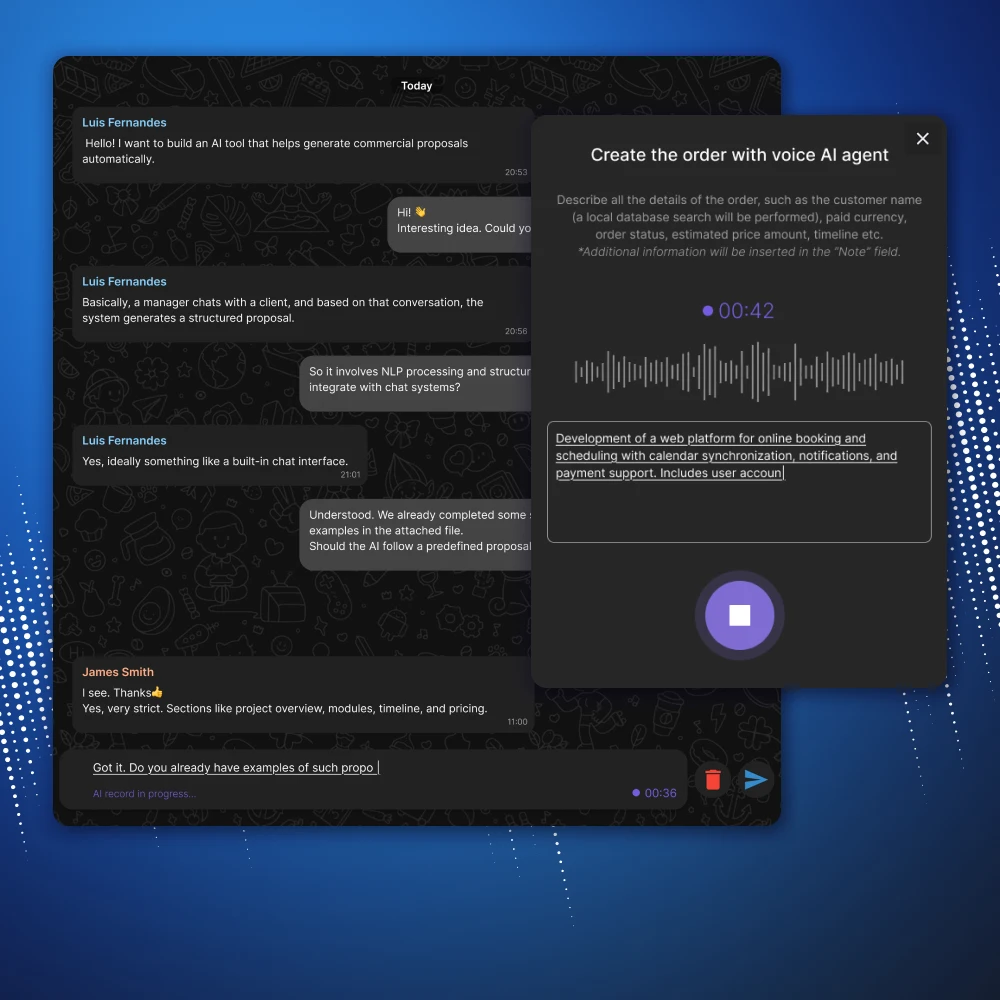

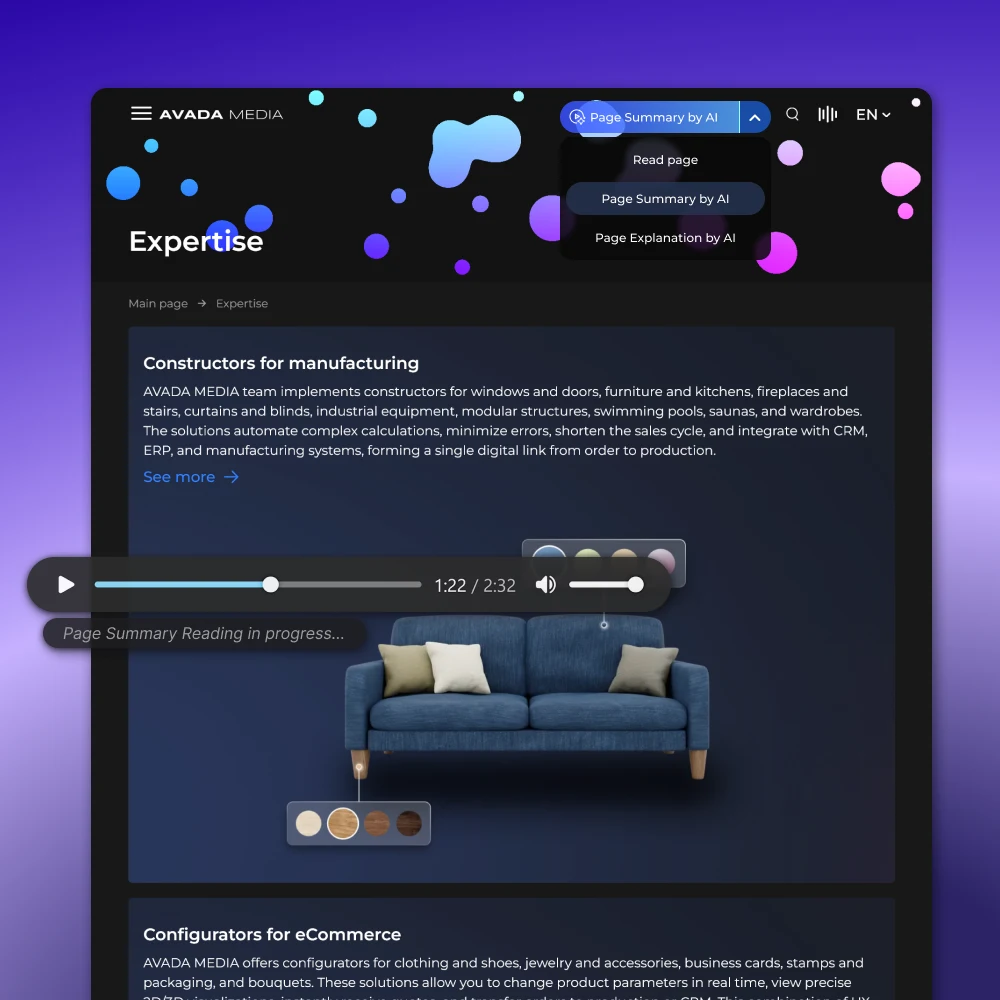

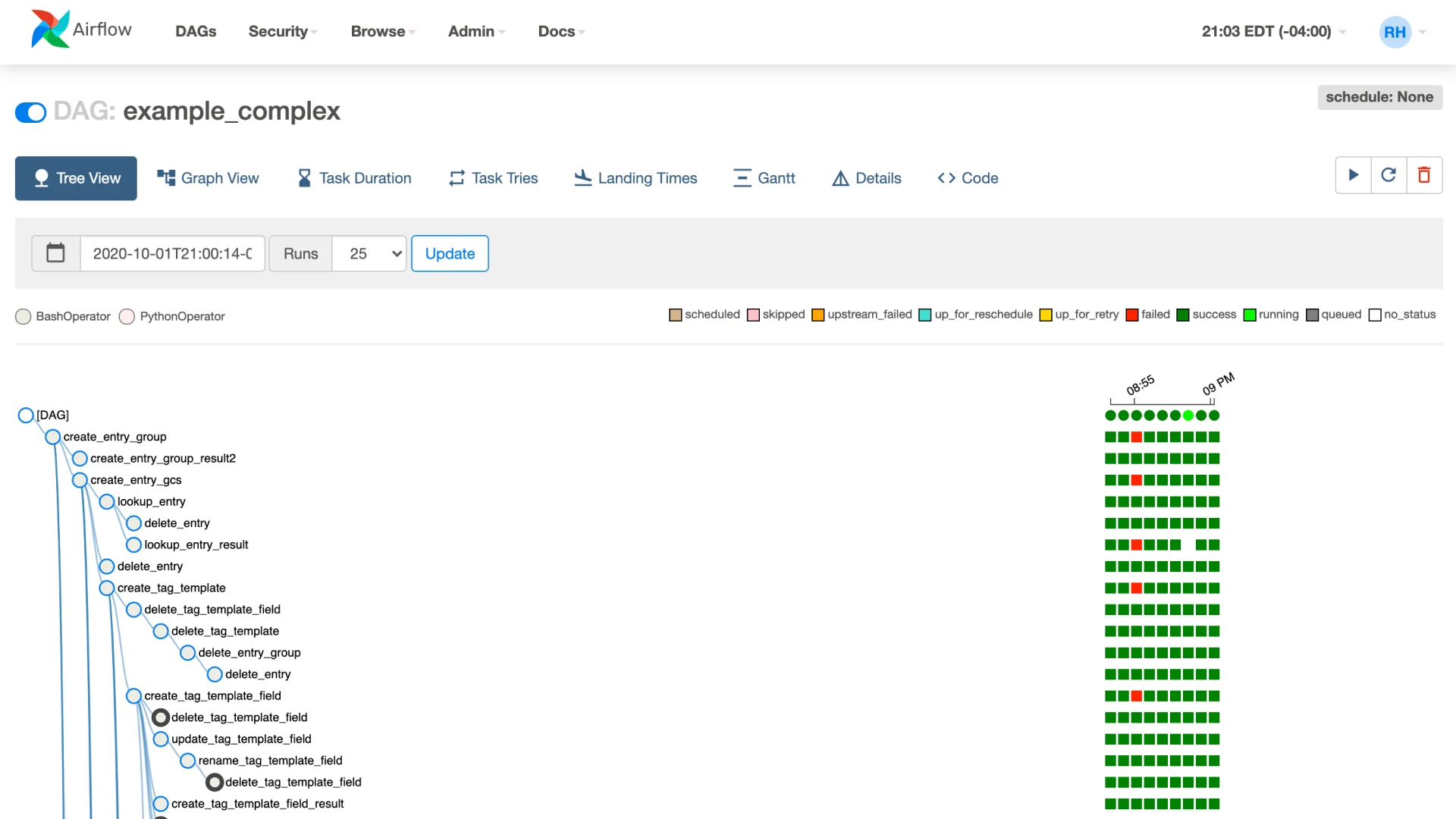

Pipeline orchestration:

Apache Airflow and Prefect allow you to describe dependencies between tasks, manage schedules, and monitor the execution of complex multi-step processes so that a failure in one place doesn't bring down the entire system.
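The core idea behind orchestration, running tasks in dependency order and containing failures, can be illustrated without any orchestrator. The sketch below uses Python's standard `graphlib` module and is not the Airflow or Prefect API; the task names and the `run_pipeline` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical pipeline).
dependencies = {
    "extract_orders": set(),
    "extract_users": set(),
    "transform": {"extract_orders", "extract_users"},
    "load_warehouse": {"transform"},
    "refresh_dashboard": {"load_warehouse"},
}


def run_pipeline(dependencies, tasks):
    """Run tasks in dependency order; skip anything downstream of a failure."""
    status = {}
    blocked = set()  # tasks that failed or were skipped
    for name in TopologicalSorter(dependencies).static_order():
        if dependencies[name] & blocked:
            status[name] = "skipped"
            blocked.add(name)
            continue
        try:
            tasks[name]()
            status[name] = "success"
        except Exception:
            status[name] = "failed"
            blocked.add(name)
    return status


def ok():
    pass


def source_unavailable():
    raise RuntimeError("source unavailable")


tasks = {name: ok for name in dependencies}
tasks["extract_users"] = source_unavailable  # simulate one failing task

status = run_pipeline(dependencies, tasks)
```

In this run the independent `extract_orders` task still succeeds, while only the tasks that depend on the failed one are skipped. Real orchestrators add scheduling, retries, and monitoring on top of the same dependency graph idea.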

Data streaming:

Apache Kafka provides reliable, real-time event transmission between system components with high throughput and minimal latency.

Data storage:

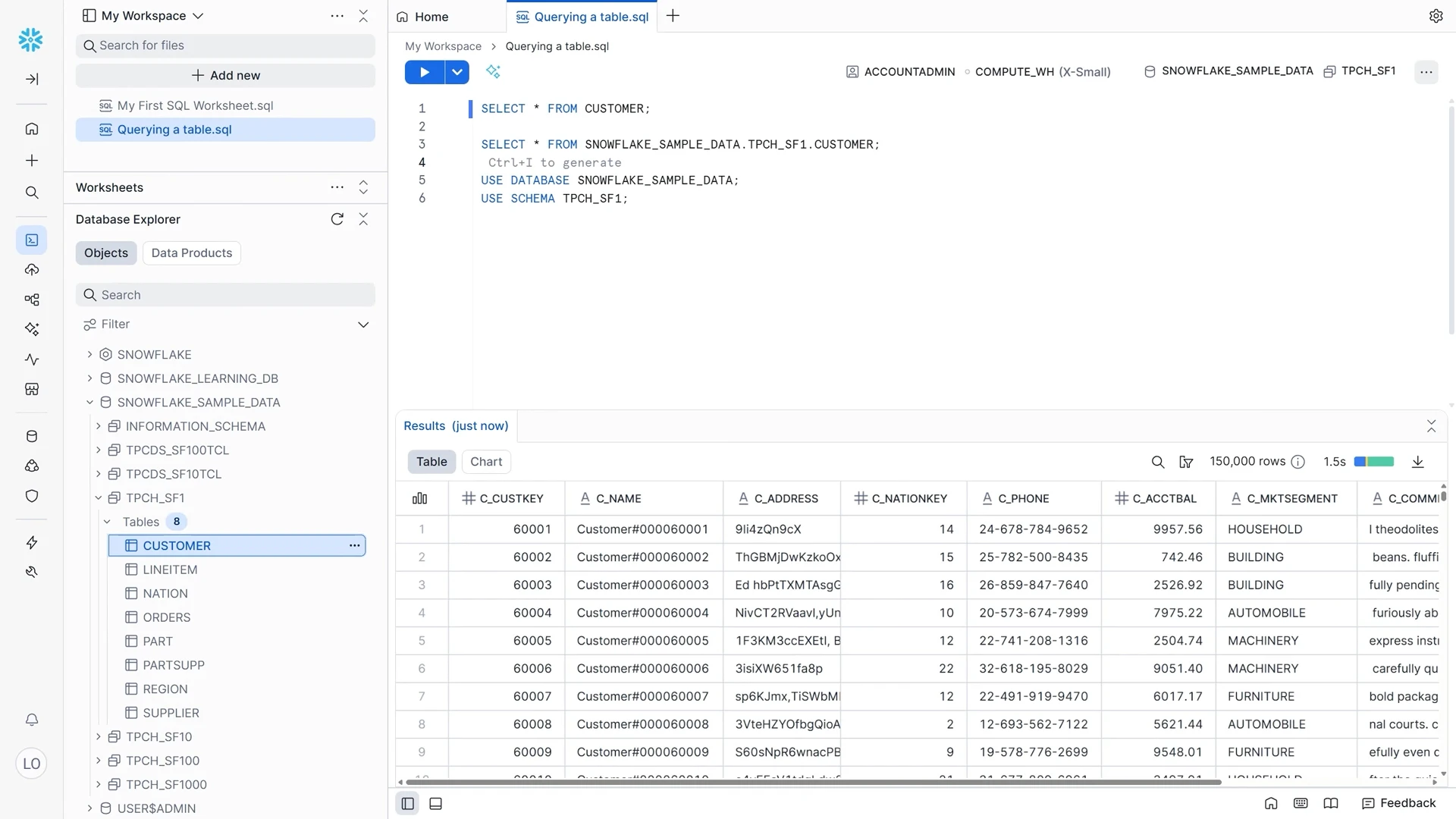

- Snowflake, BigQuery, and Amazon Redshift are cloud data warehouses for scalable analytical queries.

- Data lakes are used to store raw data in its original format – before it is processed and structured.

Where is data engineering applied?

Data engineering underlies most products that work with data on an industrial scale. Here are a few examples of what this looks like in practice.

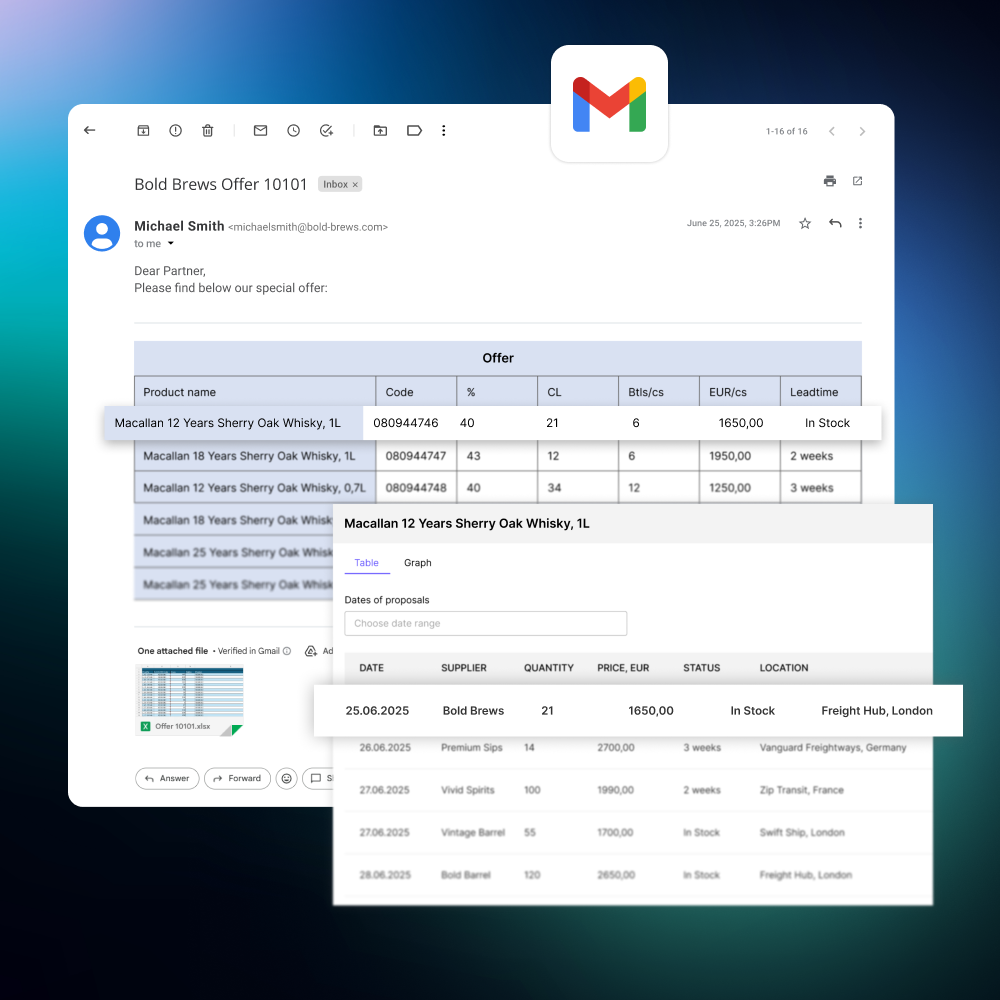

Analytics platforms. Companies like Uber or Airbnb process billions of events per day—sessions, actions, conversions, geolocations. A data engineer builds a pipeline that aggregates this data from multiple touchpoints and delivers it to dashboards in an up-to-date state, without manual exports or delays.

Machine learning. When Spotify trains models for personalized playlists or Tesla for its autopilot, they rely on terabytes of prepared data. A data engineer creates training datasets of the required size, cleans them of noise, and establishes a process for regularly updating the samples as new data arrives.

Recommender systems. Netflix and Amazon base their recommendations on streaming user actions in real time. A data engineer creates streaming pipelines that capture events—views, clicks, purchases—and feed them into the recommendation engine with minimal latency.

Marketing analytics. Large retailers like Zalando or ASOS manage dozens of advertising channels simultaneously. A data engineer combines data from paid traffic, social media, email, and affiliate programs into a single attribution model and provides a single point of access to all marketing statistics.

Why Hire Data Engineers at CortexIntellect

Experience in data pipeline development. We design both batch and streaming pipelines and select architectures based on specific load and latency requirements.

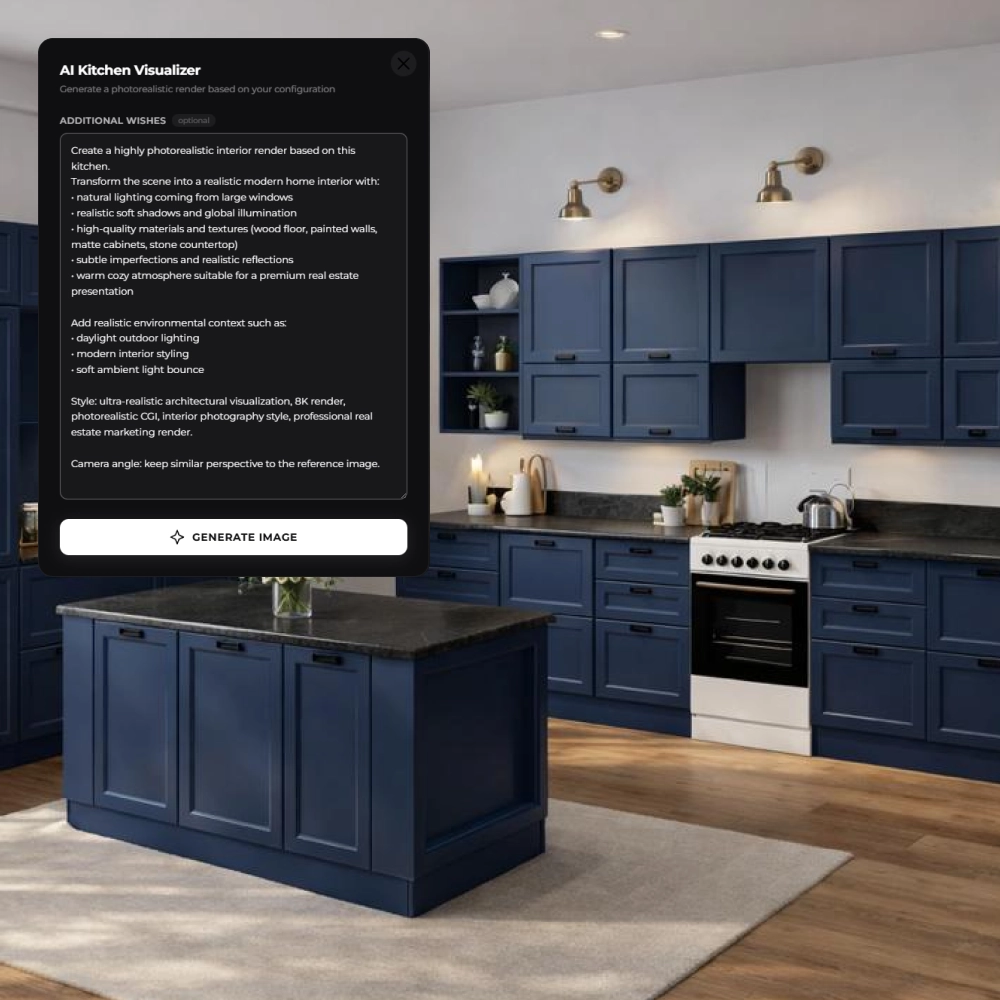

Expertise in AI and ML projects. Our specialists understand what data a model needs and in what format, and prepare it without repeated rounds of clarification with your team.

A modern technology stack. Apache Spark, Airflow, Kafka, Snowflake, BigQuery, AWS, GCP, Azure – we work with the tools used by enterprise-level tech companies.

Experience working with big data. We've successfully implemented projects with terabytes of data, where pipelines have to handle peak loads without sacrificing performance.

A flexible collaboration model. A specialist can be engaged for a one-time task, a specific project phase, or long-term data infrastructure support.

Hire a Data Engineer for your project

Contact us – we'll discuss your data architecture, select a specialist for the task, and get you up and running quickly.

FAQ

How long does it take to connect a Data Engineer to a project?

We can connect you to a specialist quickly: after an initial consultation, we select an engineer for the task and coordinate the start date. In most cases, work begins within a few days of your request.

At what stage of product development is a Data Engineer needed?

The sooner, the better. If a product already accumulates data from multiple sources, but lacks a unified system for collecting and processing it, this is a sign that a Data Engineer is needed now. Putting off hiring until an ML or analytics team is in place is not a good idea: without a ready-made infrastructure, their work simply won't start.

What is the difference between a one-time task and a long-term collaboration?

A one-time approach is suitable for a specific outcome: setting up a pipeline, designing a data warehouse, or auditing the existing infrastructure. Long-term support is for companies that want their data infrastructure to evolve alongside the product and not require a separate specialist search for each new request.

Will a specialist be able to handle our volume of data?

Our engineers work with terabyte-scale volumes and build systems designed to withstand peak loads. The architecture is tailored to specific project parameters, including data delivery speed, update frequency, and scalability requirements.

Do we need to understand the technology stack ourselves to set a task?

No. To get started, it's enough to describe the business problem: where the data comes from, who uses it and how, and what the desired outcome is. Choosing the tools and the architecture is the engineer's responsibility. This is precisely what the initial consultation is for.