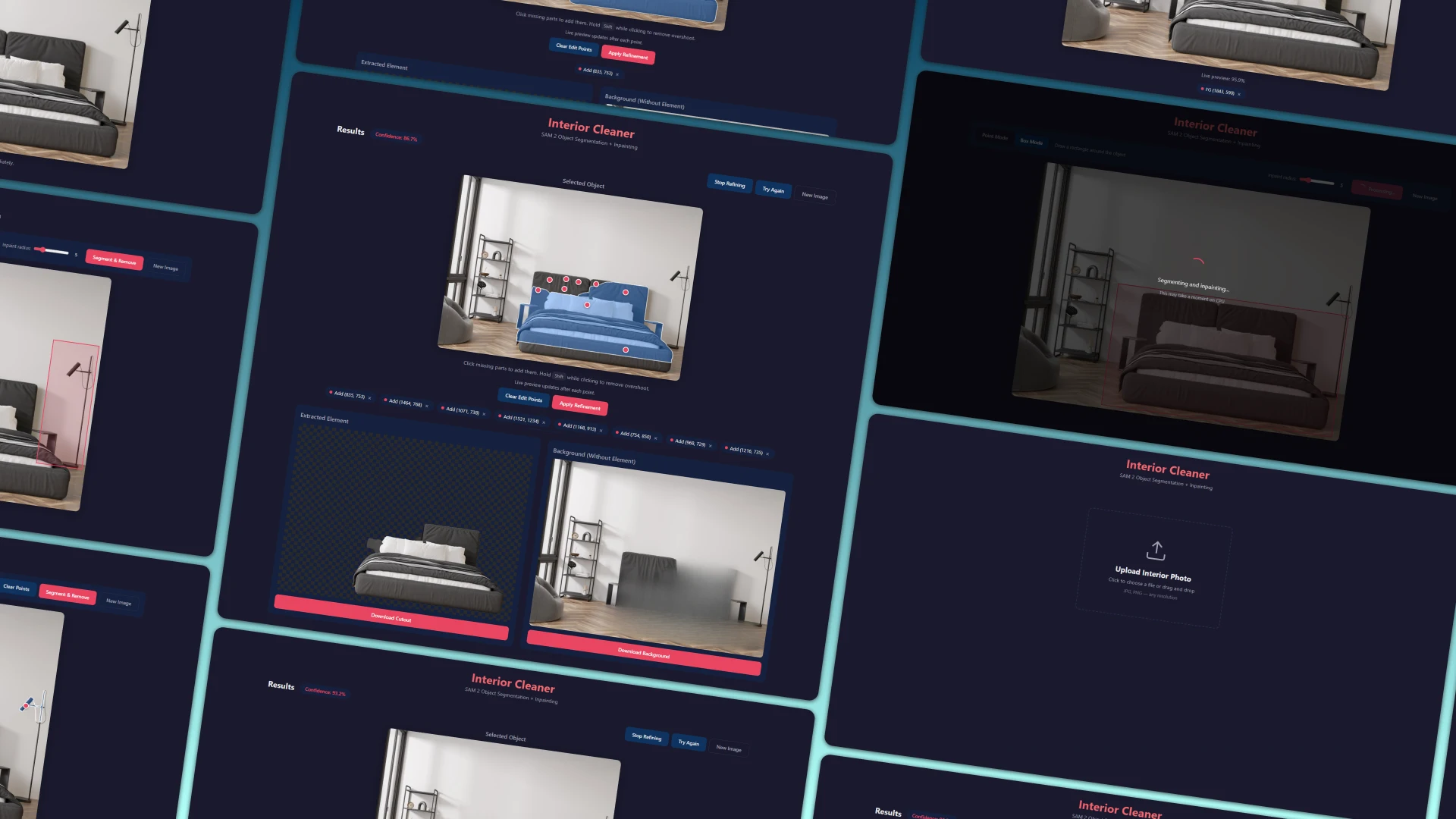

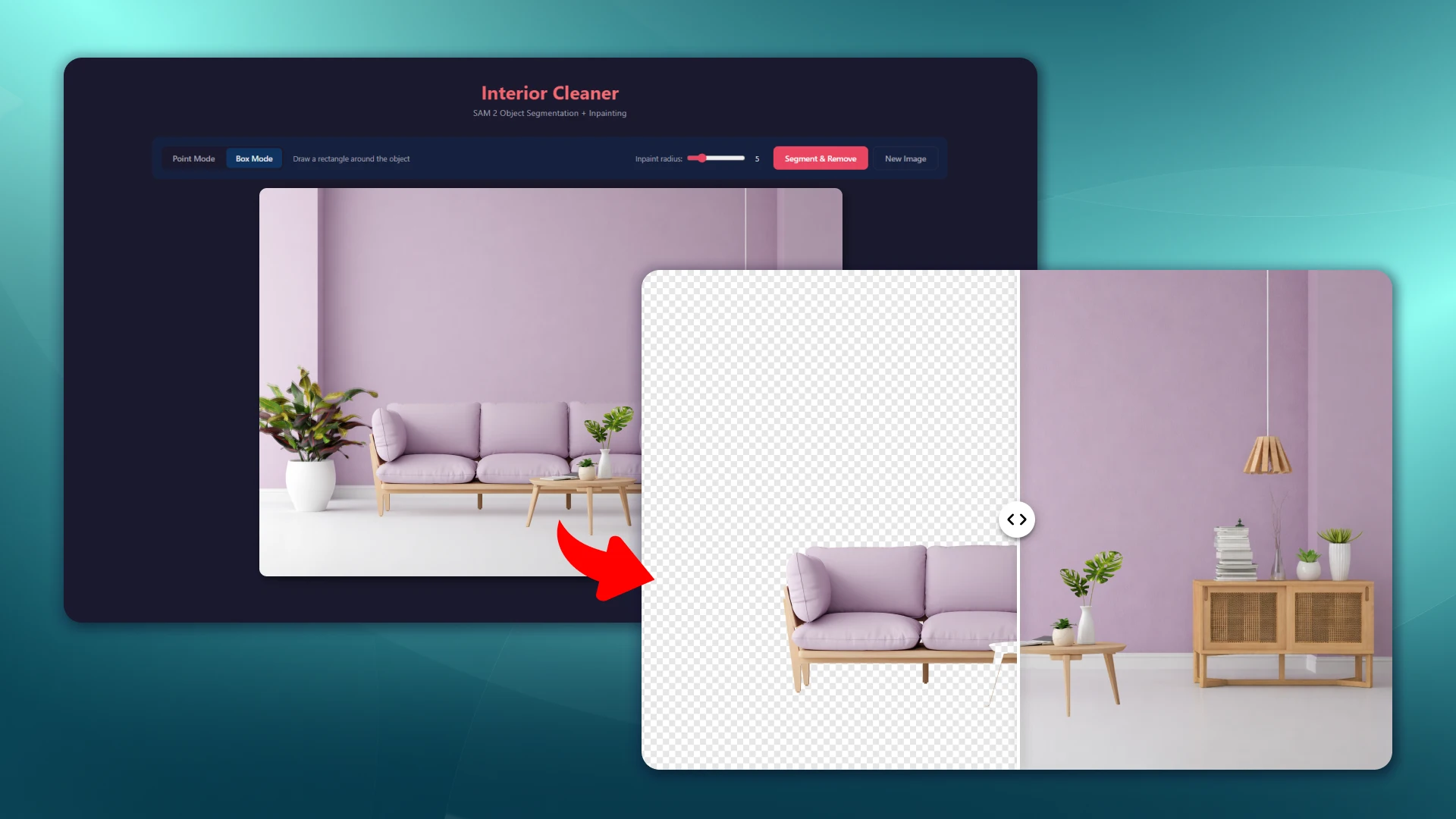

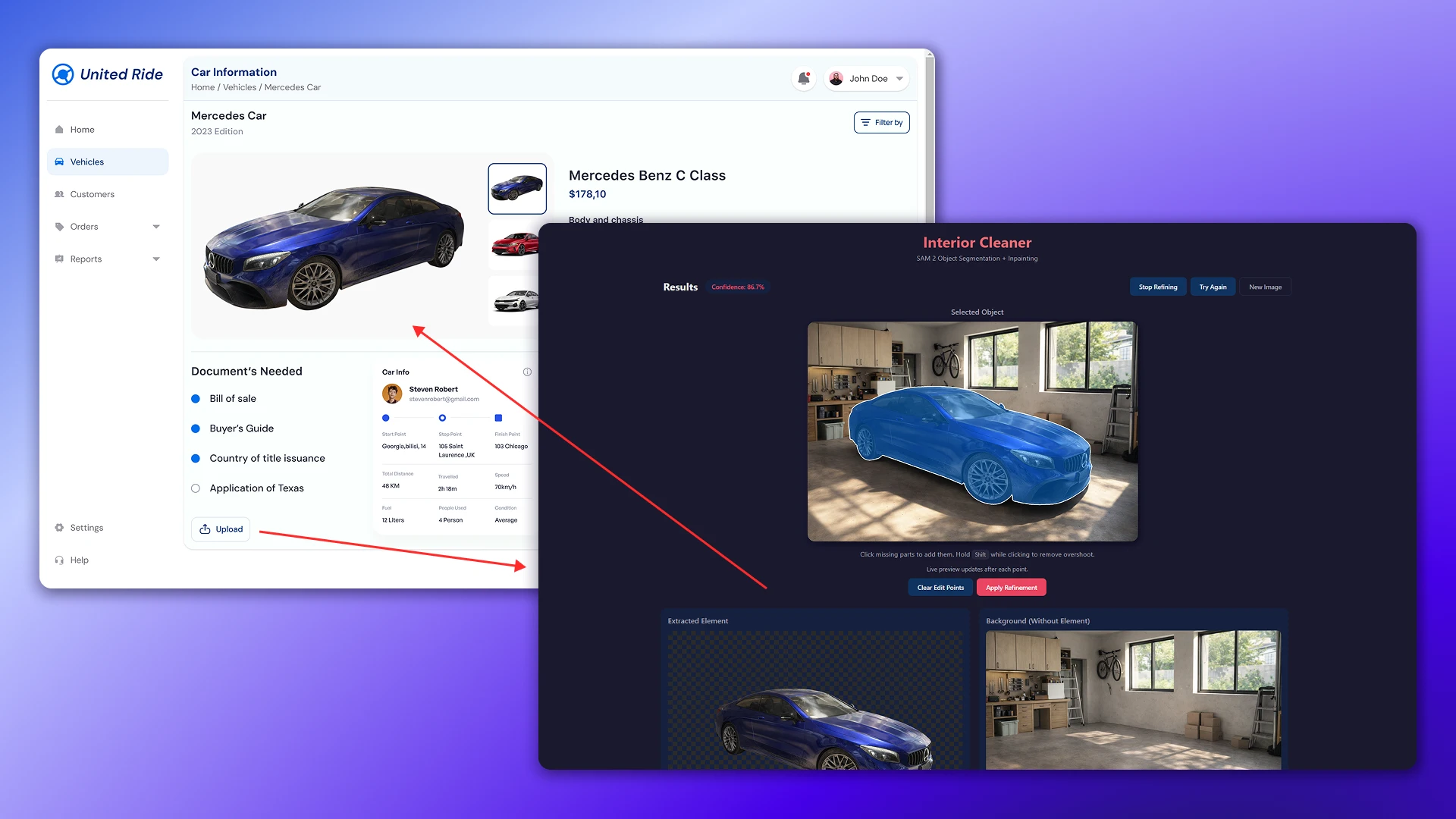

The project delivered a web tool for precisely selecting objects in images, then extracting and removing them from the scene. The solution is designed for practical applications: image preparation, content automation, and integration into other systems (configurators, catalogs, and image generation).

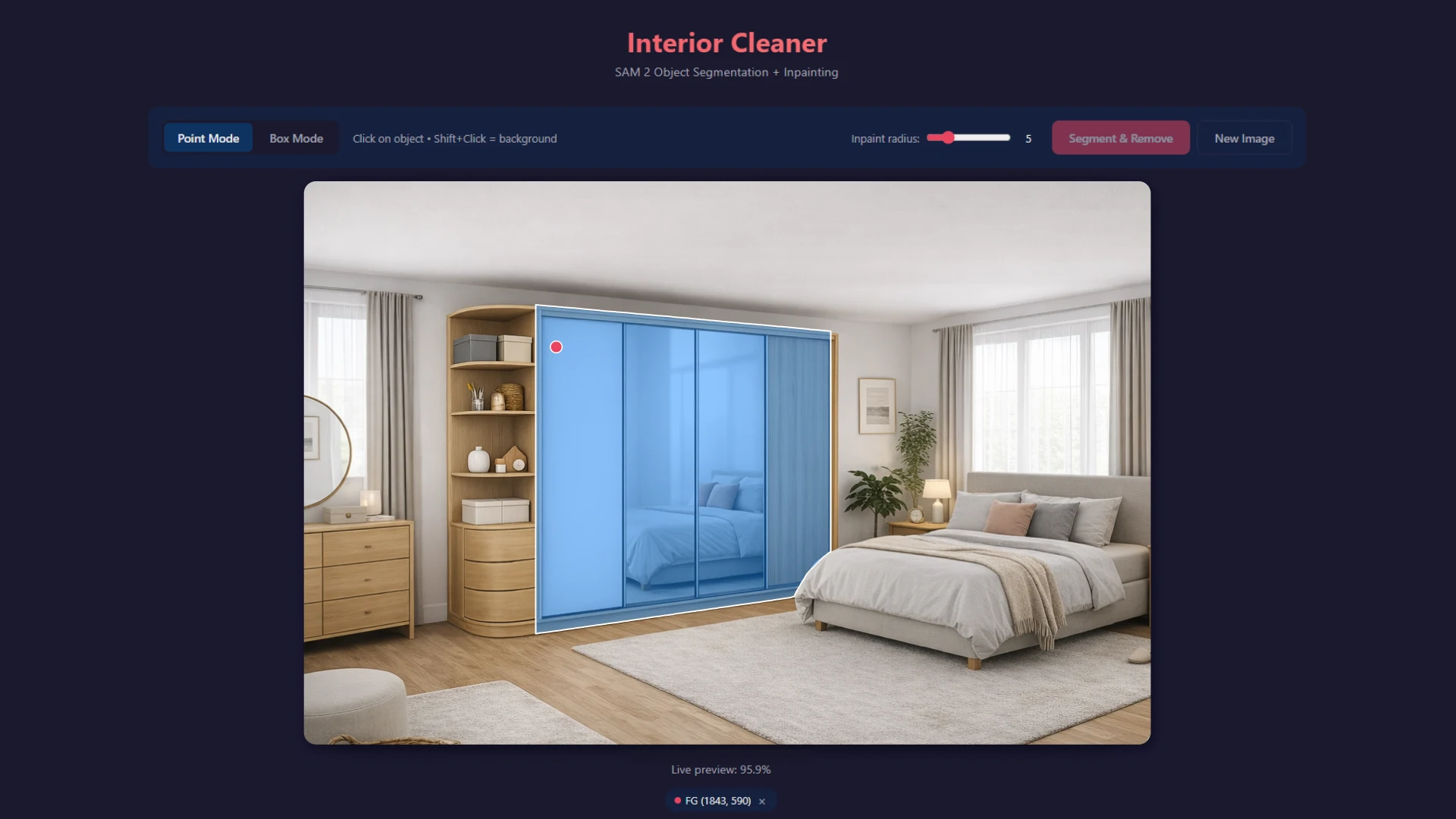

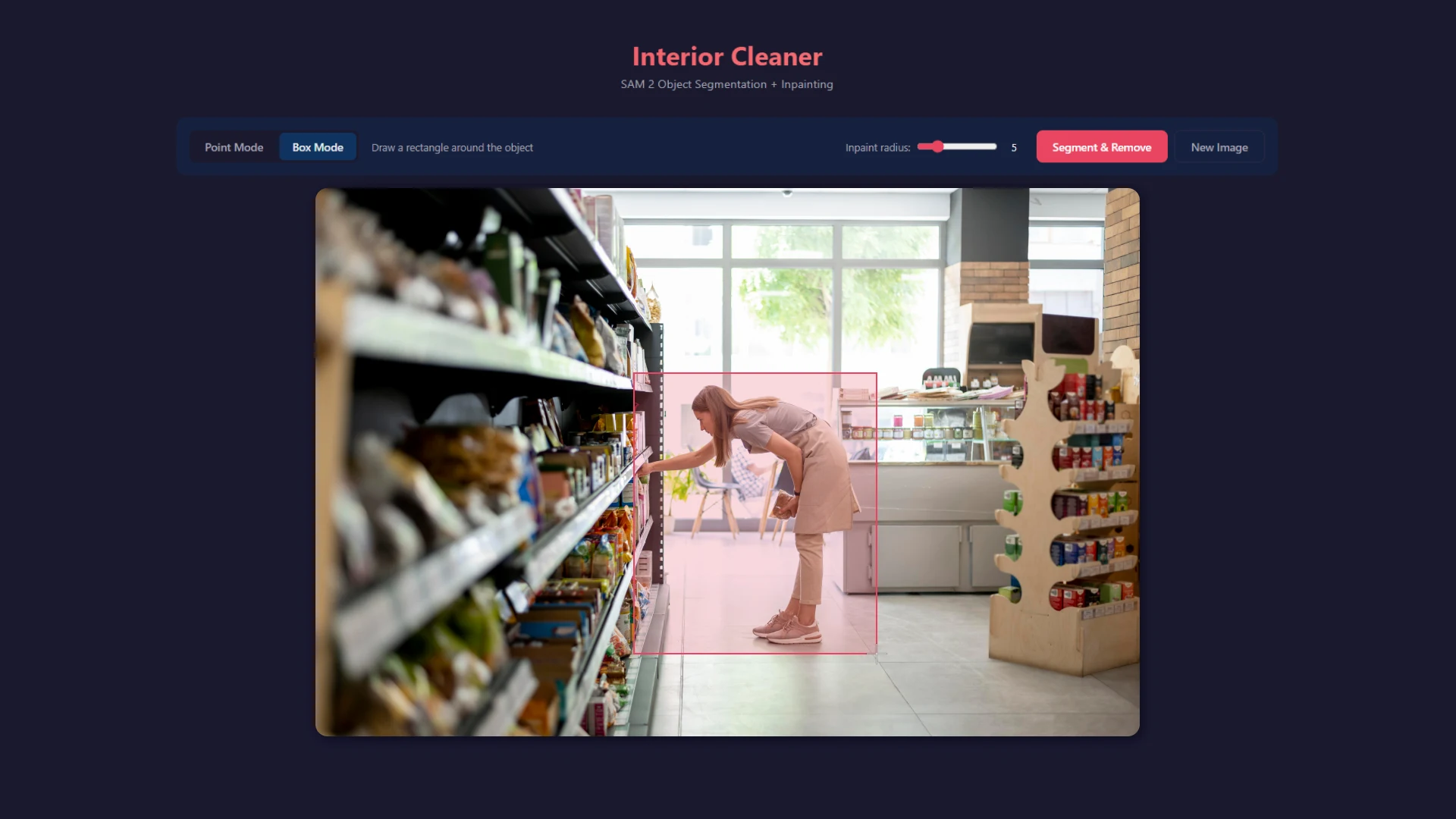

The user performs a single action: selecting an area around an object. The selection can be either rectangular or freeform (defined by points).

All further processing – segmentation, mask cleaning, object extraction, and background restoration – occurs automatically on the backend.

Project objective

The goal was not just to “cut out an object”, but to cover the full cycle of working with a scene:

- precise segmentation without manual marking

- obtaining a pixel-level mask

- extracting an object without losing geometry

- object removal with background restoration

- return of finished results without additional processing

The key requirement is minimal UX (no brushes, no manual correction) while maintaining the accuracy of the result.

Practical application and real-life cases

In practice, this tool is used not as a standalone editor, but as part of workflows where it is important to work with images quickly and accurately.

- E-commerce (products and catalogs)

Product photo → selection → the system automatically cuts out the object and removes the background.

👉 The output is a finished image for the product card, with no manual processing.

- Interiors and real estate

Removing furniture, objects, or visual noise from a scene.

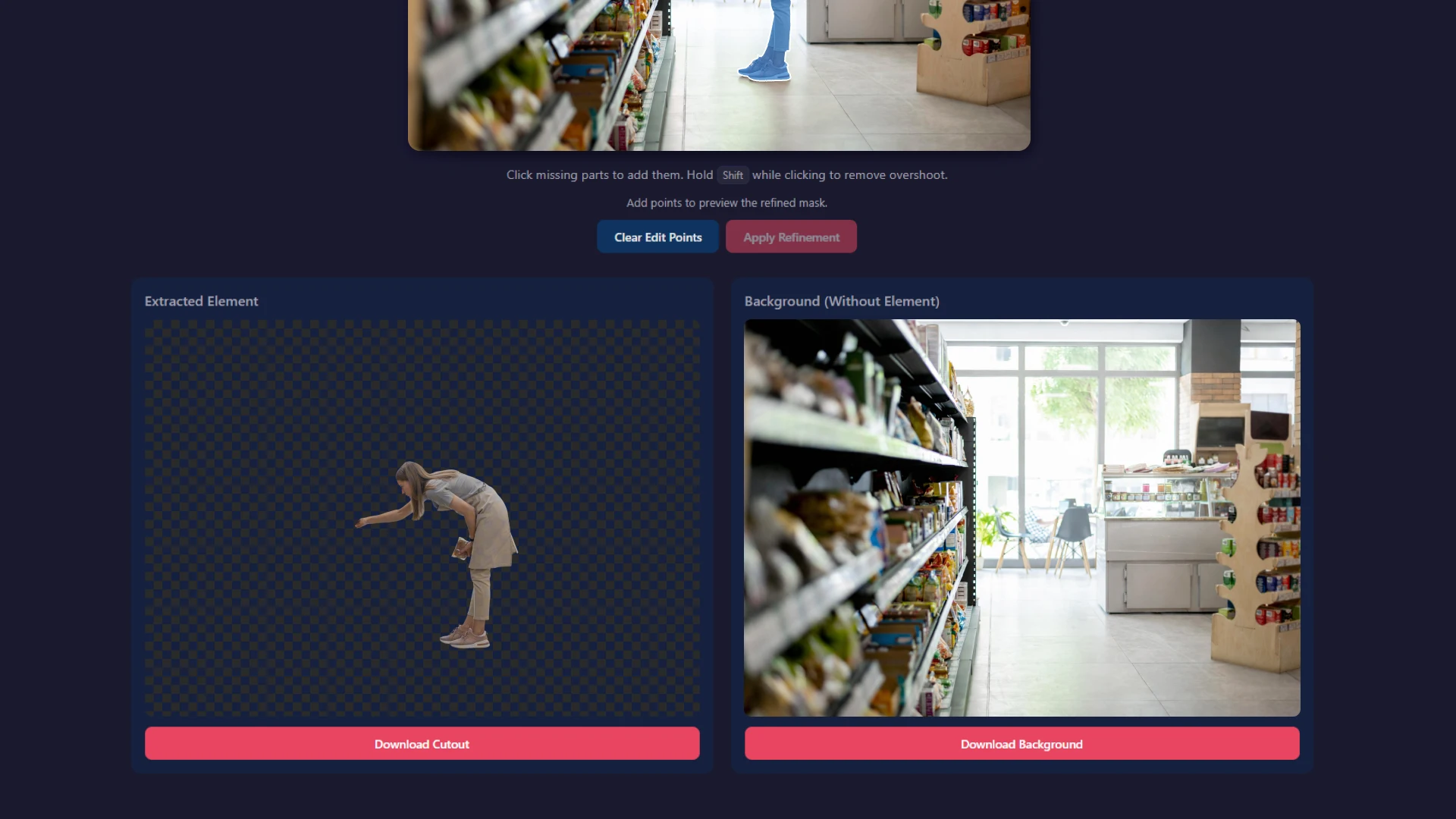

👉 Allows you to display “clean space” and prepare images for sale or visualization.

- Removing people from photos

Passers-by, clients, employees, or random people in the frame are automatically removed from photos.

👉 Used for:

— cleaning of interiors and display cases

— preparing photos for websites and catalogues

— marketing materials without “noise”

- Configurators (doors, furniture, goods)

The object is extracted as a separate layer → its color, materials, and textures can then be changed.

👉 This is the basis for interactive configurators.

- Content and Marketing

The object is extracted and used in various creatives, banners, and landing pages.

👉 Quick visual production without photo shoots.

- Bulk image processing

Working with catalogues and large volumes of content.

👉 Significant reduction in time and costs.

UX / user scenario

The flow is simplified as much as possible:

- The user uploads an image

- Selects an object:

— either a rectangle (bounding box)

— or an arbitrary shape defined by points

- Clicks "Process"

- Gets the result

Why this approach?

- a bounding box selects an object quickly

- if necessary, the boundaries can be set precisely with points

- both kinds of selection are suitable as input for SAM

- it lowers the entry barrier and provides flexibility for complex cases

How the system works

When a user uploads an image and selects an object with a rectangle, the backend receives two things:

- the image itself

- coordinates of the selected area

Technically it looks like this:

{

"image": file,

"bbox": [x1, y1, x2, y2]

}

That is, the system does not receive a “ready object,” but simply a hint – where to look for it.

What happens next (pipeline)

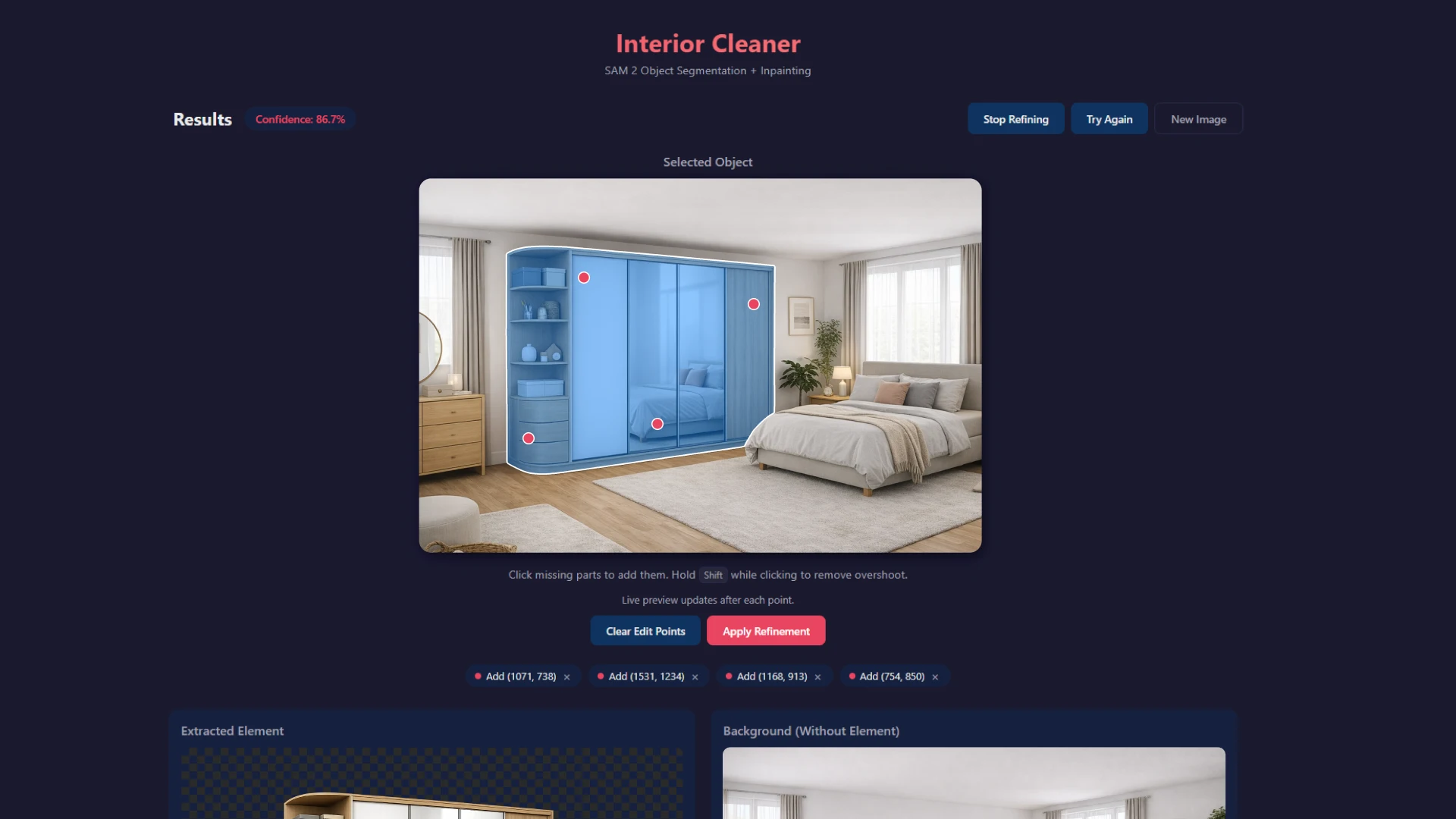

Next, sequential image processing begins. Important: this isn't a single step, but several stages, each of which accomplishes its own task.

1. Image preparation

First, the backend normalizes the data:

- the image is decoded

- it is converted to the required format and size

- the user's selection is validated

This keeps the model running stably and without errors in the later stages.
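The selection check described above might look roughly like the sketch below. It assumes the `bbox` arrives as `[x1, y1, x2, y2]` in pixel coordinates with the origin at the top-left corner; the names `BBox` and `normalize_bbox` are hypothetical and used only for illustration, not taken from the project's code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BBox:
    x1: int
    y1: int
    x2: int
    y2: int


def normalize_bbox(raw, width, height):
    """Order, clamp, and validate a raw [x1, y1, x2, y2] selection."""
    if len(raw) != 4:
        raise ValueError("bbox must contain exactly four numbers")
    x1, y1, x2, y2 = (int(round(v)) for v in raw)
    # The user may drag from any corner, so order the coordinates first.
    x1, x2 = sorted((x1, x2))
    y1, y2 = sorted((y1, y2))
    # Clamp the box to the image bounds.
    x1, x2 = max(0, x1), min(width, x2)
    y1, y2 = max(0, y1), min(height, y2)
    # Reject selections that are empty or lie completely outside the image.
    if x2 - x1 < 1 or y2 - y1 < 1:
        raise ValueError("bbox is empty or lies outside the image")
    return BBox(x1, y1, x2, y2)
```

Rejecting a bad selection at this point means the segmentation model is only ever called with a box that actually overlaps the image.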

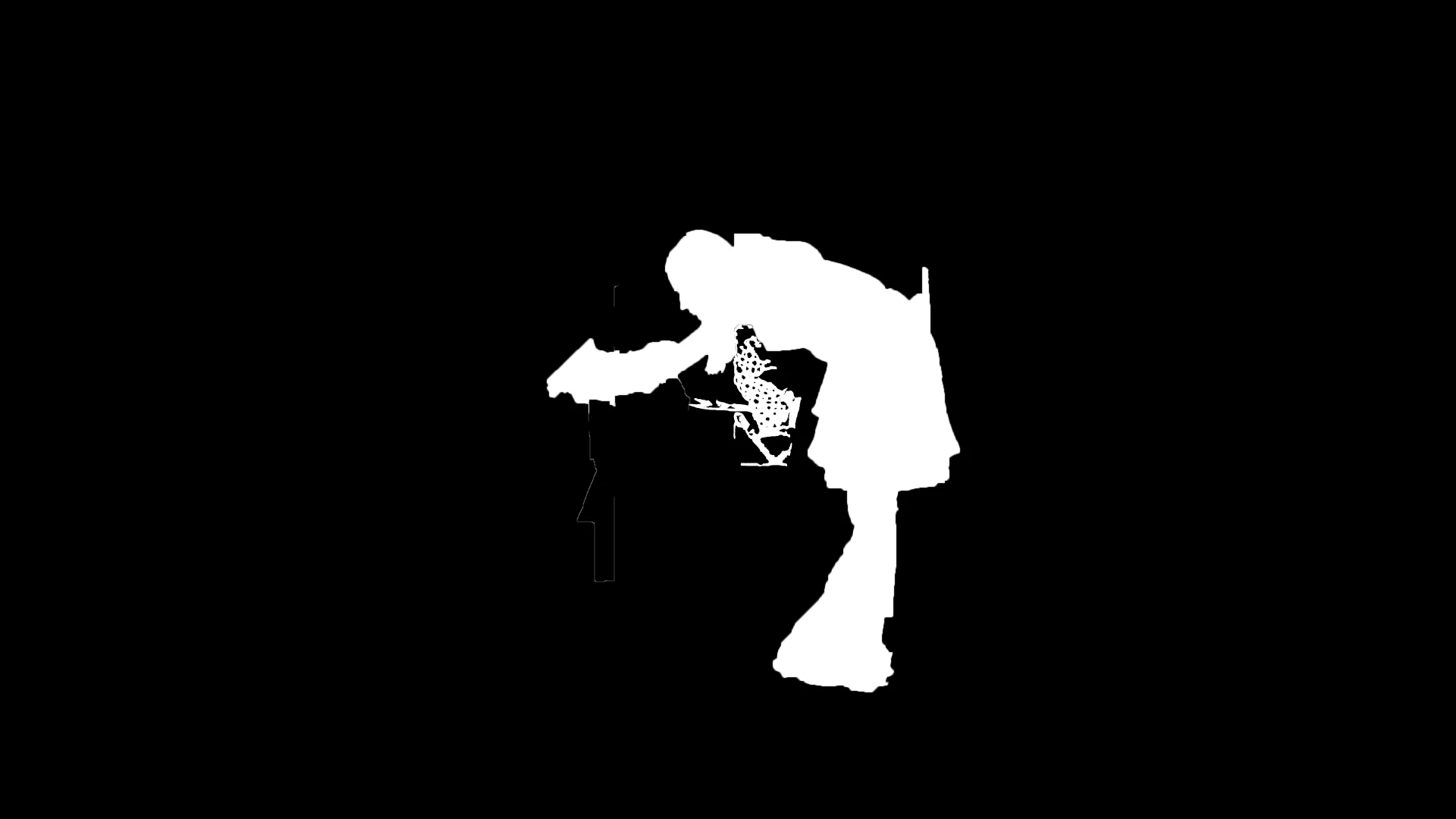

2. Object definition (segmentation)

Next, the SAM 2 model comes into play.

What it actually does:

- looks at the area that the user has selected

- "understands" where the real object is inside it

- separates the object from the background

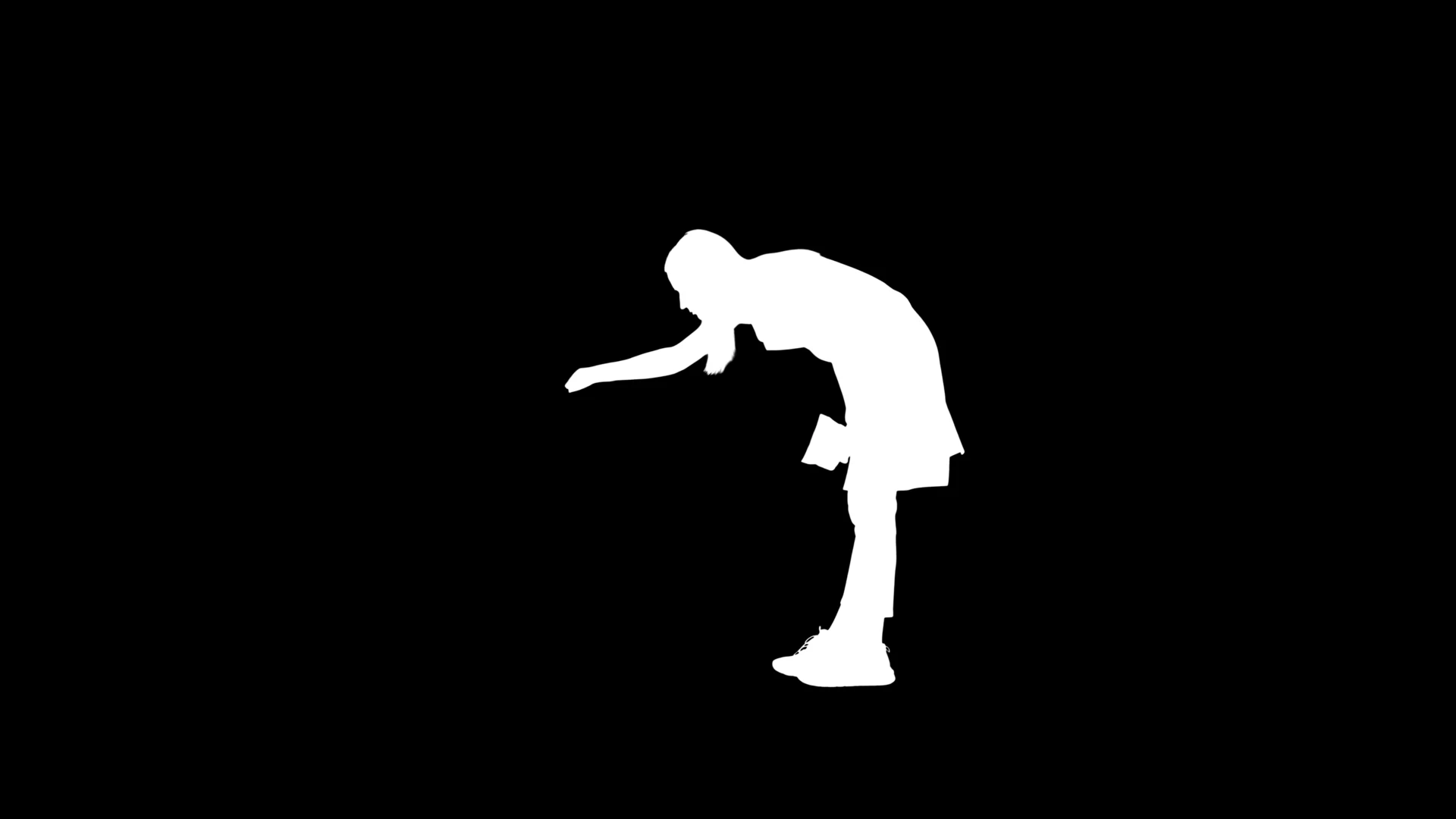

The output is an exact mask, where:

- white is the object

- black is everything else

Important:

This is not just a rectangle, but a precise selection of the shape – taking into account all the curves, details and contours.

3. Cleaning the mask

The mask that comes out of the model is not perfect, so it is refined:

- small stray pixels are removed

- the edges are smoothed out

- the mask is checked to confirm that the object forms one solid region

Why this is necessary:

Without this step, the object would have jagged edges or stray pieces of background.
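The first of these refinements, dropping small stray regions, can be sketched as below. This is a minimal illustration that assumes the mask is a 2D boolean array. The function name `remove_small_components` and the `min_area` threshold are hypothetical, and a production pipeline would more likely rely on an image-processing library than on a hand-written flood fill.

```python
from collections import deque

import numpy as np


def remove_small_components(mask, min_area):
    """Drop 4-connected foreground regions smaller than min_area pixels."""
    mask = np.asarray(mask, dtype=bool)
    height, width = mask.shape
    seen = np.zeros_like(mask)
    cleaned = np.zeros_like(mask)
    for sy, sx in zip(*np.nonzero(mask)):
        if seen[sy, sx]:
            continue
        # Breadth-first flood fill collects one connected region.
        region = [(sy, sx)]
        queue = deque(region)
        seen[sy, sx] = True
        while queue:
            y, x = queue.popleft()
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                inside = 0 <= ny < height and 0 <= nx < width
                if inside and mask[ny, nx] and not seen[ny, nx]:
                    seen[ny, nx] = True
                    region.append((ny, nx))
                    queue.append((ny, nx))
        # Keep only regions that are large enough to be part of the object.
        if len(region) >= min_area:
            ys, xs = zip(*region)
            cleaned[np.array(ys), np.array(xs)] = True
    return cleaned
```

Edge smoothing and hole filling are separate operations and are not covered by this sketch.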

4. Cutting out the object

Now that there is an accurate mask, the object is neatly separated from the image.

Technically it is done like this:

object = image * mask

In practice this means:

- only the object itself remains

- the background becomes transparent

What is important:

- the size of the object does not change

- the proportions are preserved

- the position doesn't shift

The output is a ready-made PNG that can be used immediately.
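As a rough illustration of the `object = image * mask` step, the sketch below builds an RGBA array in which the mask doubles as the alpha channel. It assumes an RGB image stored as a `uint8` array of shape (height, width, 3) and a mask of shape (height, width); the function name `extract_object` is hypothetical.

```python
import numpy as np


def extract_object(image, mask):
    """Return an RGBA array the same size as image with a transparent background."""
    image = np.asarray(image, dtype=np.uint8)
    mask = np.asarray(mask, dtype=bool)
    if image.shape[:2] != mask.shape:
        raise ValueError("mask and image must have the same height and width")
    rgba = np.zeros((*image.shape[:2], 4), dtype=np.uint8)
    # object = image * mask: background pixels become zero.
    rgba[..., :3] = image * mask[..., None]
    # Alpha channel: opaque on the object, fully transparent elsewhere.
    rgba[..., 3] = mask.astype(np.uint8) * 255
    return rgba
```

Because the output keeps the full canvas size, the object stays at its original coordinates, which is what keeps the size, proportions, and position unchanged. An array like this can then be written out as a PNG with an imaging library.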

5. Removing an object from the scene

At the same time, the system performs a second operation – removing the object from the original image.

An "empty space" is created where the object was:

hole = image * (1 - mask)

But simply deleting it isn't enough. The background needs to be restored.
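The "empty space" step can be sketched as follows, under the same assumptions as before (a `uint8` RGB array and a same-sized binary mask). The function name `punch_hole` is hypothetical. Returning the hole mask together with the image is also an assumption, based on the fact that inpainting models generally need to be told which region to fill.

```python
import numpy as np


def punch_hole(image, mask):
    """Zero out the masked pixels and return (holed_image, hole_mask)."""
    image = np.asarray(image, dtype=np.uint8)
    hole = np.asarray(mask, dtype=bool)
    if image.shape[:2] != hole.shape:
        raise ValueError("mask and image must have the same height and width")
    # Work on a copy so the original image stays available for extraction.
    holed = image.copy()
    # In array terms this is image * (1 - mask).
    holed[hole] = 0
    return holed, hole
```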

6. Background restoration (inpainting)

The LaMa model is used for this purpose.

What it does:

- analyzes what surrounds the removed object

- "completes" the missing area

- restores texture

This works best on:

- walls

- floors

- uniform textures

As a result, there is no “hole” left – the image looks seamless.

7. What does the user receive?

As a result, the system returns two results at once, together with the original image:

{

"original": "...",

"object": "...",

"clean": "..."

}

Where:

- object – a cut-out object without a background

- clean – image without this object

These are ready-made files that can be used further without any modifications.

How it works technically

Frontend

- Vue 3

- Canvas

Canvas is not used as the main rendering engine, but as an auxiliary tool:

- to select an area on an image

- to record the coordinates (points) selected by the user

- to simplify the transfer of this data for processing

In this case, Canvas does not work directly with the image itself, but serves as an interaction layer – collecting points by which the area of interest is determined.

How this works

- The user selects an area on the image

- The coordinates of the selected zone are recorded via Canvas

- This data is fed into the segmentation model (SAM 2)

- The model returns the object's mask

- The mask is applied over the original image as a second layer.

- As a result, the user sees the outline/area that the model has defined.

Backend

- Python

- responsible for the entire pipeline

- processes images

- calls AI models

AI part

- SAM 2 – selects an object

- LaMa – restores the background

The whole process is divided into stages:

- segmentation

- extraction

- restoration

This is important because:

- models can be swapped

- the system can be scaled

- individual parts can be improved
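One way to keep the stages independent is to treat each one as a plain callable that the pipeline composes. The sketch below is illustrative only: the `Pipeline` class and its field names are hypothetical, and the stage functions are stand-ins for the real SAM 2 and LaMa wrappers.

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence


@dataclass
class Pipeline:
    # Each stage is an ordinary function, so a model can be swapped
    # without touching the other stages.
    segment: Callable[[Any, Sequence[int]], Any]   # (image, bbox) -> mask
    extract: Callable[[Any, Any], Any]             # (image, mask) -> object
    restore: Callable[[Any, Any], Any]             # (image, mask) -> clean image

    def run(self, image, bbox):
        mask = self.segment(image, bbox)
        return {
            "original": image,
            "object": self.extract(image, mask),
            "clean": self.restore(image, mask),
        }
```

With this shape, replacing the inpainting model means passing a different `restore` function, and each stage can be tested on its own with simple stubs.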

What is important in implementation

- It's not just "cutting out a picture"

The system actually understands where the object is, and doesn't just cut out a rectangle.

- The object retains its geometry

It can be inserted back or used further without distortion.

- Minimum user interaction

There is no need to outline the contour – just select the area.

- Works without task-specific training

There is no need to collect a dataset for each type of product.

Performance

Depends on:

- image size

- complexity of the object

- server load

What it looks like in reality:

- simple objects - fast

- complex scenes - a little longer, but stable

Limitations

There are situations where the result may be worse:

- the object merges with the background (low contrast)

- several objects intersect

- very complex textures (fine details)

How can I use the tool?

The current version solves the basic problem: precise object selection, extraction, and scene cleanup. However, the architecture was designed from the outset to serve as a core tool for other solutions, not as a final product.

Practical use

- Preparing content for e-commerce

— automatic product cropping from photos

— preparing images for cards

— background cleaning without a designer

- Generating a catalog

— one source → different presentation options

— transferring an object to other backgrounds

— creating visuals without photo shoots

- Scene cleaning

— removal of unnecessary objects (furniture, items)

— preparation of "clean" interiors

— working with real-life photos

- Basis for configurators

— the selected object can be used as a layer

— then change the color, materials, textures

— integration with 2D/3D configurators

- Preparing data for AI

— segmented objects → input for generative models

— training/fine-tuning

— creation of datasets

How can it be improved?

The architecture allows for functionality to be expanded without rewriting the system.

Auto-detection (without bbox)

Currently the user specifies the area manually.

The next step is to automatically detect objects in the scene:

- list of objects

- click to select

- working with the image as a "smart scene"

Batch processing

Processing not one image, but a whole batch:

- catalog upload

- mass segmentation

- automatic export

Relevant for e-commerce and production.

Integration with generative models

After segmentation you can:

- change materials and colors

- generate variations

- create new scenes

In other words, the tool can grow into a full-fledged AI configurator.

Working with 3D/depth

Adding depth analysis:

- understanding the depth of the scene

- correct insertion of objects

- transition to 3D configuration

Integration into business processes

— CRM (image transfer)

— CMS (content management)

— e-commerce (product cards)

The tool becomes part of the pipeline, not a separate service.

Improving accuracy

— further processing of masks

— hybrid segmentation models

— adaptation to specific categories (furniture, clothing, etc.)

Result

A tool has been implemented that:

- accurately selects an object without manual marking

- extracts it without losing geometry

- removes an object with correct background restoration

- returns ready data for further use

Essentially, this is the base layer for any system that requires working with an image as a structured scene, rather than as a "picture."

Would you like to implement such a tool?

We'll develop a solution tailored to your needs: from basic segmentation to full integration into your website, CRM, or e-commerce.

We'll show you how it will work in your specific case and what tasks it will solve.

👉 Submit a request – we'll analyze the task and offer a solution tailored to your business